AI bias audit

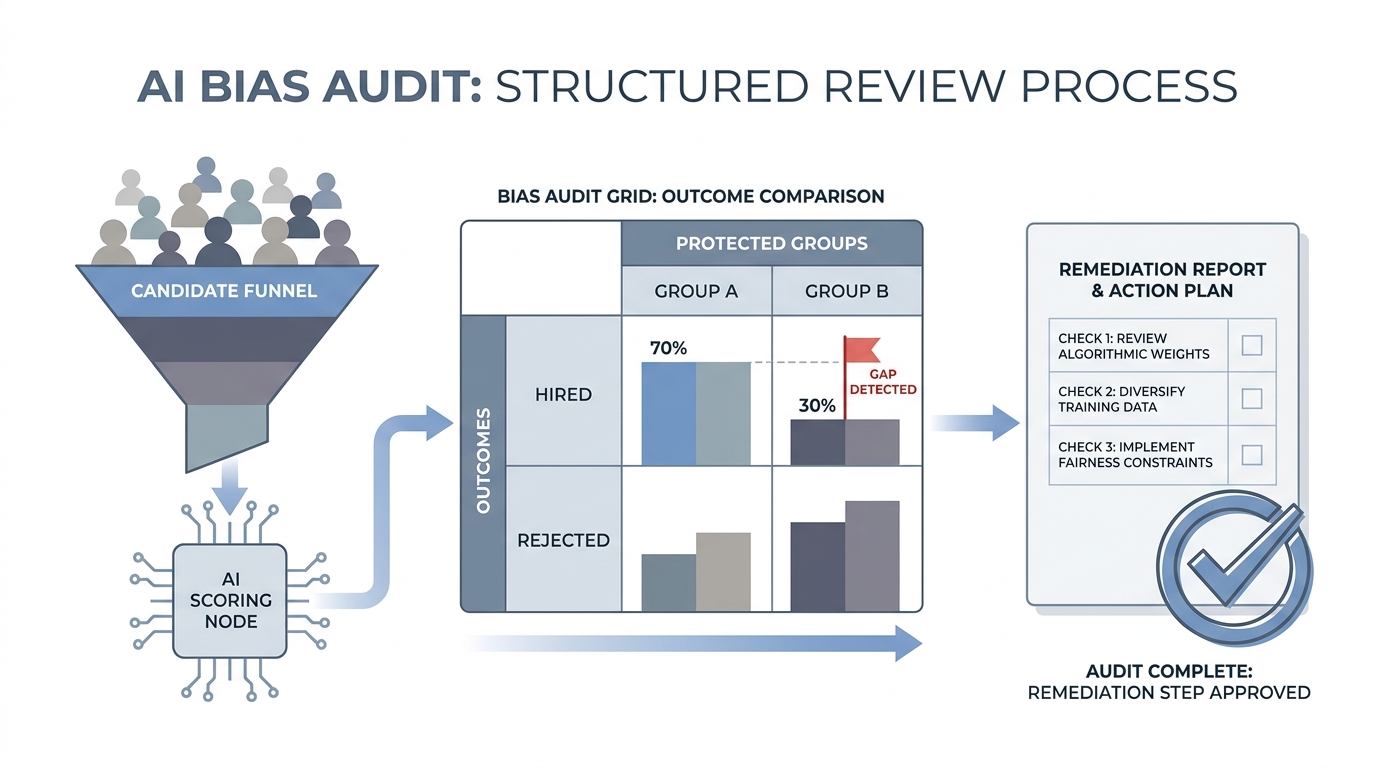

A structured review of an AI tool's inputs, logic, and outputs to detect patterns that disadvantage protected groups in hiring decisions before those patterns cause legal or reputational harm.

Michal Juhas · Last reviewed May 3, 2026

What is an AI bias audit?

An AI bias audit is a structured review of a hiring tool's inputs, logic, and outputs to detect patterns that produce worse outcomes for protected groups: women, racial minorities, older candidates, people with disabilities, or others covered by employment law. Unlike a one-time sign-off before launch, a useful bias audit is a recurring process tied to each funnel stage the tool touches.

You do not need a data scientist to start. A spreadsheet showing pass rates by protected group at one funnel stage is already an audit. What you do need is a clear owner, a regular cadence, and vendor cooperation when the problem lives in training data rather than in the outputs your team controls.

In practice

- A TA ops lead exports 90 days of AI-scored resume decisions, splits them by gender using EEO self-report fields, and finds that profiles from women pass at 71% versus 89% for men. That table is the beginning of a bias audit and a legal conversation.

- When asked, a vendor sends over a model card showing training data from 2018 to 2022, a period when fewer women were promoted in the industry the model was calibrated on. The training-data gap is itself an audit finding, not just the output gap it produces.

- An HR partner at a Tuesday debrief asks "when did we last run a bias check on the screening tool?" and nobody knows. That is the most common audit failure in practice.
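The gap in the first example can be checked against the four-fifths rule in the EEOC Uniform Guidelines. The rule divides each group's selection rate ($SR$) by the highest group's rate and generally treats a ratio below 0.8 as evidence of adverse impact. Using the 71% and 89% pass rates from that example:

```latex
\text{impact ratio} = \frac{SR_{\text{women}}}{SR_{\text{men}}} = \frac{0.71}{0.89} \approx 0.798 < 0.80
```

The ratio lands just under the threshold, so the tool would be flagged even though both groups pass at what looks like a healthy rate. Whether the gap is statistically meaningful still depends on how many candidates sit behind each percentage.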

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in your stack, contracts, or quarterly compliance calendar.

Plain-language summary

- What it means for you: Someone needs to check whether the AI your team uses treats applicant groups differently. If no one is doing that, the first time anyone notices will be when legal or the press asks.

- How you would use it: Pull your last quarter of AI-scored or AI-filtered candidates, split by protected group using EEO fields, and check whether any group consistently scores lower or gets rejected at a higher rate.

- How to get started: Ask your ATS vendor for a decision log export. Ask the AI vendor for their most recent bias audit summary or model card. Name who will review both documents before you go live with a new tool.

- When it is a good time: Before signing a new vendor contract, before each model update reaches production, and at least once a quarter on any tool that touches the shortlist or offer stage.
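The "pull a quarter and split by protected group" step can be sketched in Python. This sketch assumes a decision-log export with one row per AI screening decision. The `Decision` fields (`eeo_group`, `passed`, `decided_on`) and the `pass_rates_by_group` helper are hypothetical names and do not come from any ATS vendor's schema, so a real export will need its columns mapped onto them.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Decision:
    """One AI screening decision from a hypothetical decision-log export."""
    candidate_id: str
    eeo_group: str    # self-reported EEO category; keep "Not disclosed" as its own group
    passed: bool      # True if the tool advanced the candidate
    decided_on: date


def pass_rates_by_group(decisions, as_of, window_days=90):
    """Return {group: (passed, total, pass_rate)} for decisions in the trailing window."""
    start = as_of - timedelta(days=window_days)
    counts = defaultdict(lambda: [0, 0])  # group -> [passed, total]
    for d in decisions:
        if start < d.decided_on <= as_of:
            counts[d.eeo_group][1] += 1
            if d.passed:
                counts[d.eeo_group][0] += 1
    return {group: (p, n, p / n) for group, (p, n) in counts.items()}


# Hypothetical rows; the December decision falls outside the 90-day window.
log = [
    Decision("c1", "Female", True, date(2026, 4, 2)),
    Decision("c2", "Female", False, date(2026, 4, 9)),
    Decision("c3", "Male", True, date(2026, 4, 3)),
    Decision("c4", "Male", True, date(2025, 12, 1)),
]
print(pass_rates_by_group(log, as_of=date(2026, 5, 1)))
```

The rejection rate is 1 minus the pass rate, so one table answers both questions in the "How you would use it" bullet. The raw counts stay next to each rate on purpose, because a 100% pass rate built on one candidate says very little.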

When you are running live reqs and tools

- What it means for you: Every AI ranker, filter, or score that moves candidates in or out of the funnel is a selection procedure under EEOC guidance. Group-rate monitoring is the minimum; tracing bias back to training data or proxy features is the full audit.

- When it is a good time: Before vendor contract signature, after any model update, and quarterly on production tools. A spike in rejection rates for one req type or one demographic segment is a signal to run the check immediately.

- How to use it: Log candidate IDs, stage decisions, model version, and timestamp together. Run pass-rate ratios by protected group quarterly. Keep findings with owner names and review dates. Cross-link to adverse impact thresholds so your reporting connects to the legal standard.

- How to get started: Ask current vendors for their bias audit results and which demographic groups they tested. If no audit exists, that is your first compliance conversation. Add an audit clause to new vendor contracts before you are legally required to.

- What to watch for: Proxy features (zip code, school prestige, employment gaps) that correlate with protected class. Small-sample results that look clean but are statistically meaningless. Model updates that change scoring without notifying your compliance team. Vendors who conflate "we tested for gender" with "we tested all protected classes."
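The small-sample warning can be made concrete with a two-proportion z-test, which asks whether a pass-rate gap is larger than random variation alone would explain. This is a minimal sketch and not a legal standard. The 30-candidate floor (`min_n`) and the 0.05 significance level (`alpha`) are illustrative assumptions. For very small groups, an exact test such as Fisher's is the usual substitute for this normal approximation.

```python
import math


def two_proportion_z_test(passed_a, n_a, passed_b, n_b):
    """Pooled two-proportion z-test. Assumes n_a, n_b > 0. Returns (z, two-sided p-value)."""
    rate_a = passed_a / n_a
    rate_b = passed_b / n_b
    pooled = (passed_a + passed_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Every candidate passed (or every one failed) in both groups: no gap to test.
        return 0.0, 1.0
    z = (rate_a - rate_b) / se
    # Two-sided tail probability of a standard normal: erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def classify_gap(passed_a, n_a, passed_b, n_b, min_n=30, alpha=0.05):
    """Label a pass-rate gap; min_n and alpha are illustrative, not regulatory."""
    if min(n_a, n_b) < min_n:
        return "insufficient sample"
    _, p_value = two_proportion_z_test(passed_a, n_a, passed_b, n_b)
    return "significant gap" if p_value < alpha else "no significant gap"


# Hypothetical counts: a roughly 70% vs 90% split at two very different volumes.
print(classify_gap(71, 100, 89, 100))  # significant gap
print(classify_gap(7, 10, 9, 10))      # insufficient sample
```

A similar percentage gap can be strong evidence at 100 candidates per group and noise at 10. For that reason, the counts belong in the audit memo alongside the rates.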

Where we talk about this

On AI with Michal live sessions, AI bias audits come up in the AI in recruiting track alongside adverse impact because both require the same funnel data and similar calculation skills. We walk through a simplified group-rate table on anonymized data, review a sample model card from a real vendor category, and practice the one-page audit memo that compliance teams need before you sign. If you want the full conversation with peers who bring real vendor contracts, start at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Navigating Employment Discrimination in AI and Automated Systems: A New Civil Rights Frontier (U.S. EEOC) is the agency's January 2023 public hearing on automated systems in hiring and selection decisions.

- Navigating AI Compliance: Employer Best Practices Pt. 2 (Weintraub Tobin) covers internal AI policies, privilege-aware bias audits, and how employers document findings when tools touch hiring and screening.

- What is the New York City Bias Audit Law (Local Law 144)? (Holistic AI) walks through NYC automated employment decision tool rules, independent bias audits, and compliance checkpoints teams use before go-live.

Reddit

- r/humanresources threads on "AI screening bias" and "bias audit" mix practitioner questions with compliance team experience from real debrief rooms.

- r/recruiting has recurring threads on AI resume screeners where bias risk comes up alongside vendor comparisons and candidate experience concerns.

- r/AIethics covers audit frameworks, fairness metrics, and research papers for practitioners who want to go deeper than the pass-rate table.

Quora

- Searching "AI bias audit hiring" on Quora surfaces HR practitioners and researchers explaining the gap between technical fairness metrics and legal adverse impact standards (read critically; quality varies by contributor).

AI bias audit versus adverse impact analysis

| | AI bias audit | Adverse impact analysis |
|---|---|---|
| Scope | Inputs, logic, training data, and outputs | Observed outcome gap at one funnel stage |
| Method | Feature review, model card review, group-rate analysis | 4/5ths rule calculation on selection decisions |
| When to run | Before launch, after each model update, quarterly | Quarterly, plus any time rejection rates spike |
| Legal driver | NYC LL 144, EEOC AI guidance, EU AI Act | EEOC Uniform Guidelines, Title VII, ADA |
| Owner | TA ops and legal or compliance partner | TA ops and legal or compliance partner |
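The 4/5ths calculation in the adverse impact column can be sketched as a small Python function. It assumes you already have one selection rate per group for a single funnel stage. The function name `impact_ratios` and the group labels and rates in the usage lines are hypothetical. The Uniform Guidelines generally treat a ratio below 0.8 as evidence of adverse impact rather than proof, so a flag here is a prompt for review, not a conclusion.

```python
def impact_ratios(selection_rates, threshold=0.8):
    """Four-fifths rule check.

    selection_rates maps group -> share of that group's applicants selected.
    Each rate is divided by the highest group's rate, and ratios below
    `threshold` are flagged. Returns {group: (impact_ratio, flagged)}.
    """
    if not selection_rates:
        raise ValueError("need at least one group")
    highest = max(selection_rates.values())
    if highest == 0:
        raise ValueError("no group was selected; impact ratios are undefined")
    return {
        group: (rate / highest, rate / highest < threshold)
        for group, rate in selection_rates.items()
    }


# Hypothetical quarterly selection rates at one funnel stage.
rates = {"Group A": 0.60, "Group B": 0.45, "Group C": 0.52}
for group, (ratio, flagged) in impact_ratios(rates).items():
    note = "  <-- below 4/5ths" if flagged else ""
    print(f"{group}: impact ratio {ratio:.2f}{note}")
```

In this example Group B (0.45 / 0.60 = 0.75) is flagged, and Group C (about 0.87) is not. The reference point is always the highest-rate group, not a fixed "majority" group.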

Related on this site

- Glossary: Adverse impact, Human-in-the-loop (HITL), Hallucination, Structured output, Scorecard, AI adoption ladder

- Blog: AI candidate screening, How to use AI in recruiting

- Guides: Hiring managers

- Live cohort: Workshops

- Membership: Become a member