Employment skills assessment

A structured test or work sample that measures whether a candidate can perform the specific tasks a role requires, graded against documented criteria before a hiring decision is made.

Michal Juhas · Last reviewed May 5, 2026

What is an employment skills assessment?

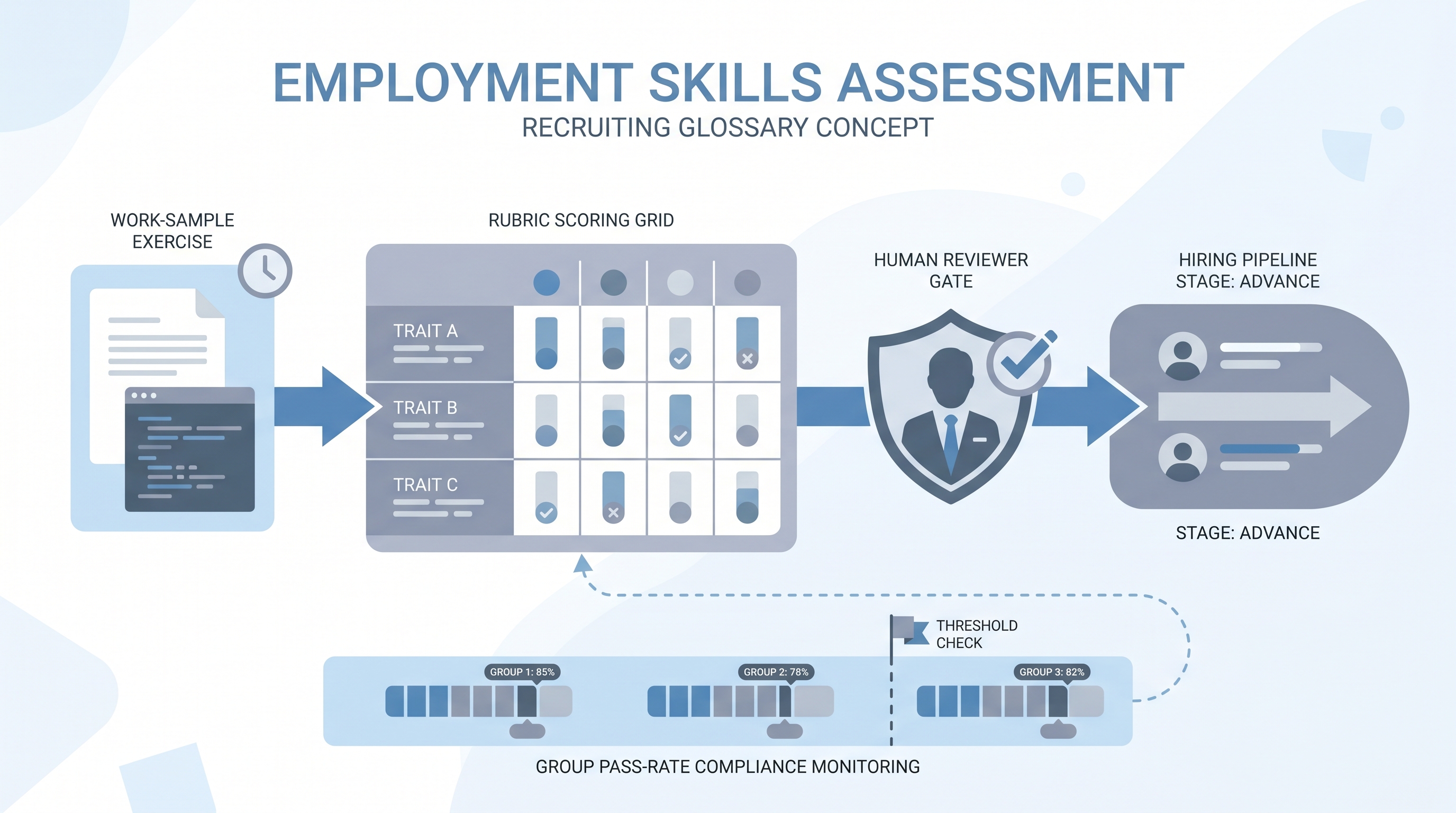

An employment skills assessment is a structured exercise that asks candidates to complete actual job tasks or realistic simulations, then scores the output against documented criteria. The category covers coding challenges, written briefs, data analysis exercises, case studies, and role-play calls graded with a shared rubric. The distinguishing feature is specificity: the task should mirror work the successful hire will do in the first 90 days, not a proxy for general intelligence or personality.

Unlike cognitive ability tests, which measure how fast a person processes abstract problems, skills assessments measure whether someone can produce the actual output the role requires. That makes them easier to explain to candidates ("here is a real problem the team works on") and often easier to defend in bias reviews because the connection to job requirements is direct rather than inferred.

In practice

- A talent team that sends a 2-hour written brief before a first interview and grades it against a rubric is running a skills assessment, even if they never call it that and keep the graded copy in a shared folder rather than an integrated platform.

- Engineering teams often call the same exercise a "take-home" or "code review" without framing it as an assessment, but the validity questions are identical: does the scoring rubric reflect the actual bar the team uses, and does anyone track pass rates by demographic group?

- When a TA ops person says "the take-home correlates with performance at 90 days," they are describing validity evidence, typically tracked through hire quality reviews rather than a formal criterion validity study.
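The "correlates with performance at 90 days" claim in the last bullet can be checked with a few lines of arithmetic. The sketch below is a minimal illustration, not a formal criterion validity study: it assumes you have a take-home score and a 90-day review rating for each hire, and the function name `pearson_r` and the sample numbers are hypothetical.

```python
from math import sqrt
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equal-length lists of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of the same length, at least 2 items")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of co-deviations and sums of squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: take-home rubric scores and 90-day review ratings
# for the same six hires, in the same order.
take_home_scores = [2.4, 3.2, 3.6, 2.8, 3.9, 3.0]
ratings_at_90_days = [2.0, 3.0, 4.0, 3.0, 4.0, 3.0]

r = pearson_r(take_home_scores, ratings_at_90_days)
print(f"correlation between take-home and 90-day rating: {r:.2f}")
```

Read the number with caution. Only hired candidates have a 90-day rating, so the sample is small and leaves out everyone the take-home screened out, which tends to make the correlation an unstable estimate.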

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the pipeline, ATS, and compliance workflow.

Plain-language summary

- What it means for you: Instead of asking how smart a candidate is, you give them a short piece of real work and score the output against a rubric, the same way the team would review a colleague's deliverable.

- How you would use it: Pick a task that takes 60 to 90 minutes, mirrors something on the team's actual backlog, and can be graded on three to five criteria a hiring manager and recruiter both agree on.

- How to get started: Write down the two or three criteria you would use to review this work if a current employee handed it in, turn those into a simple rubric, and pilot with two internal reviewers scoring the same sample before live candidates see it.

- When it is a good time: After the job requirements are stable, when you can run the task in under two hours without a live technical environment, and when you have at least two people available to calibrate scoring before launch.
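The rubric and two-reviewer pilot described above can be sketched in a few lines. This is an illustration under stated assumptions, not a prescribed format: the criterion names, the weights, the 1 to 4 rating scale, and the one-point tolerance are all hypothetical choices you would replace with whatever the hiring manager and recruiter agree on.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # relative importance; normalized when scoring


# Hypothetical three-criterion rubric for a written brief.
RUBRIC: List[Criterion] = [
    Criterion("problem_framing", 2.0),
    Criterion("accuracy", 2.0),
    Criterion("clarity", 1.0),
]

SCALE_MIN, SCALE_MAX = 1, 4  # assumed rating scale


def weighted_score(ratings: Dict[str, int], rubric: List[Criterion] = RUBRIC) -> float:
    """Weighted average of one reviewer's ratings, on the rating scale."""
    total_weight = sum(c.weight for c in rubric)
    total = 0.0
    for c in rubric:
        rating = ratings[c.name]  # KeyError if a criterion was left unscored
        if not SCALE_MIN <= rating <= SCALE_MAX:
            raise ValueError(f"{c.name}: rating {rating} is outside the scale")
        total += c.weight * rating
    return total / total_weight


def calibration_gaps(a: Dict[str, int], b: Dict[str, int],
                     rubric: List[Criterion] = RUBRIC, tolerance: int = 1) -> List[str]:
    """Criteria where two reviewers differ by more than `tolerance` points."""
    return [c.name for c in rubric if abs(a[c.name] - b[c.name]) > tolerance]


# Two internal reviewers score the same sample submission.
reviewer_a = {"problem_framing": 3, "accuracy": 4, "clarity": 2}
reviewer_b = {"problem_framing": 3, "accuracy": 2, "clarity": 2}

print(weighted_score(reviewer_a), weighted_score(reviewer_b))
print("discuss before launch:", calibration_gaps(reviewer_a, reviewer_b))
```

Any criterion that appears in the gap list is a sign the rubric wording needs tightening before live candidates see the task.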

When you are running live reqs and tools

- What it means for you: Skills assessments create scored candidate data that must sit in your ATS or a GDPR-compliant platform, not in a recruiter's inbox. The scoring rubric becomes a legal artifact once it drives hiring decisions.

- When it is a good time: When the role has a clear deliverable, when volume justifies the overhead of rubric calibration, and when your compliance team has reviewed the task for adverse impact risk before launch.

- How to use it: Wire the assessment invite to an ATS stage trigger, route completed submissions to a review queue with a clear owner, and store scores in a field that maps to your GDPR retention schedule. Check group pass rates before the first cohort finishes. See employment assessment tools for the platform layer.

- How to get started: Run one small pilot with 10 to 15 candidates before committing to a vendor. Score every submission manually the first time to understand where the rubric breaks down, then revisit automation only after calibration is reliable.

- What to watch for: Scope creep (take-homes that balloon beyond two hours), scorer disagreement (low inter-rater reliability signals a weak rubric), missing deletion paths (can your ATS trigger a GDPR purge on the scoring platform?), and AI scoring without model version logging. See adverse impact for the group pass-rate calculation.
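One common way to run the group pass-rate check is the four-fifths rule of thumb from the US EEOC Uniform Guidelines: compare each group's pass rate with the highest group's rate and look closer at anything below 0.8. The sketch below assumes that convention, which this page does not specify. The group labels, the counts, and the function names are hypothetical.

```python
from typing import Dict, List, Tuple

# group label -> (candidates who passed, candidates who took the assessment)
Outcomes = Dict[str, Tuple[int, int]]

FOUR_FIFTHS = 0.8  # assumed screening threshold, not a legal test


def pass_rates(outcomes: Outcomes) -> Dict[str, float]:
    """Pass rate per group, skipping groups with no candidates."""
    return {g: passed / total for g, (passed, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: Outcomes) -> Dict[str, float]:
    """Each group's pass rate divided by the highest group's pass rate."""
    rates = pass_rates(outcomes)
    if not rates:
        raise ValueError("no groups with candidates")
    top = max(rates.values())
    if top == 0:
        raise ValueError("nobody passed, so ratios are undefined")
    return {g: rate / top for g, rate in rates.items()}


def flagged_groups(outcomes: Outcomes, threshold: float = FOUR_FIFTHS) -> List[str]:
    """Groups whose impact ratio falls below the threshold."""
    return [g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold]


# Hypothetical pilot cohort.
cohort: Outcomes = {"group_a": (30, 50), "group_b": (18, 40)}

print(pass_rates(cohort))
print(impact_ratios(cohort))
print("review with compliance:", flagged_groups(cohort))
```

With a pilot of 10 to 15 candidates the ratios will swing widely on one or two results, so treat a flag as a prompt for review with your compliance team, not as a finding.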

Where we talk about this

On AI with Michal live sessions, skills assessment shows up in two tracks. The AI in recruiting blocks cover structured hiring design: how to brief a task, calibrate a rubric with a hiring manager, and connect the output to a shared scorecard without a manual copy-paste step. The sourcing automation blocks add the operational layer: ATS-triggered invites, score webhooks, and deletion cascade testing. If you want the full room conversation, not just this page, start at Workshops and bring your actual take-home materials and ATS names.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and do not copy assessment tasks or scripts from strangers if they move candidate data into unreviewed platforms.

YouTube

- How to Design a Skills-Based Hiring Process (LinkedIn Talent Solutions) is a practical walkthrough of building skills-first job criteria before you write a test.

- Work Samples and Assessment Centers is an academic overview from IO psychology that explains why work samples predict performance and where they fail.

- Skills-Based Hiring with AI Tools covers how recruiters are combining AI-scored exercises with rubric-based review in modern TA teams.

Reddit

- Skills-based hiring, anyone doing it? in r/recruiting is a frank discussion of what actually works and where rubric calibration falls apart on small teams.

- Take-home assignments: how long is too long? in r/cscareerquestions is the candidate-side view of scope creep that TA teams often underestimate.

- Any good resources on building better technical assessments? in r/recruiting gathers practitioner links on assessment design.

Quora

- What is a skills assessment in the context of employment? collects hiring manager and recruiter perspectives on when they work and when they backfire (read critically).

Skills assessment versus cognitive test

| Dimension | Skills assessment | Cognitive ability test |
|---|---|---|
| What it measures | Can produce specific work output | General information processing speed |
| Predictive validity | High for the specific role | High across many role families |
| Adverse impact risk | Lower for many groups | Higher on average across studies |
| Candidate time | 60 to 120 minutes | 15 to 30 minutes |
| Calibration effort | High: rubric needs training | Low: standardized scoring |
| Legal defensibility | Direct job relevance evidence | Requires validity study for the role |

Related on this site

- Glossary: Candidate assessment tools, Employment assessment tools, Employment assessment test, Adverse impact, Scorecard, Async screening, Human-in-the-loop

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member