AI-assisted interview feedback in the ATS

Using AI to draft, structure, or summarize post-interview notes directly inside the ATS, so feedback reaches the debrief with consistent fields and less lag between the interview and the review meeting.

Michal Juhas · Last reviewed May 4, 2026

What is AI-assisted interview feedback in the ATS?

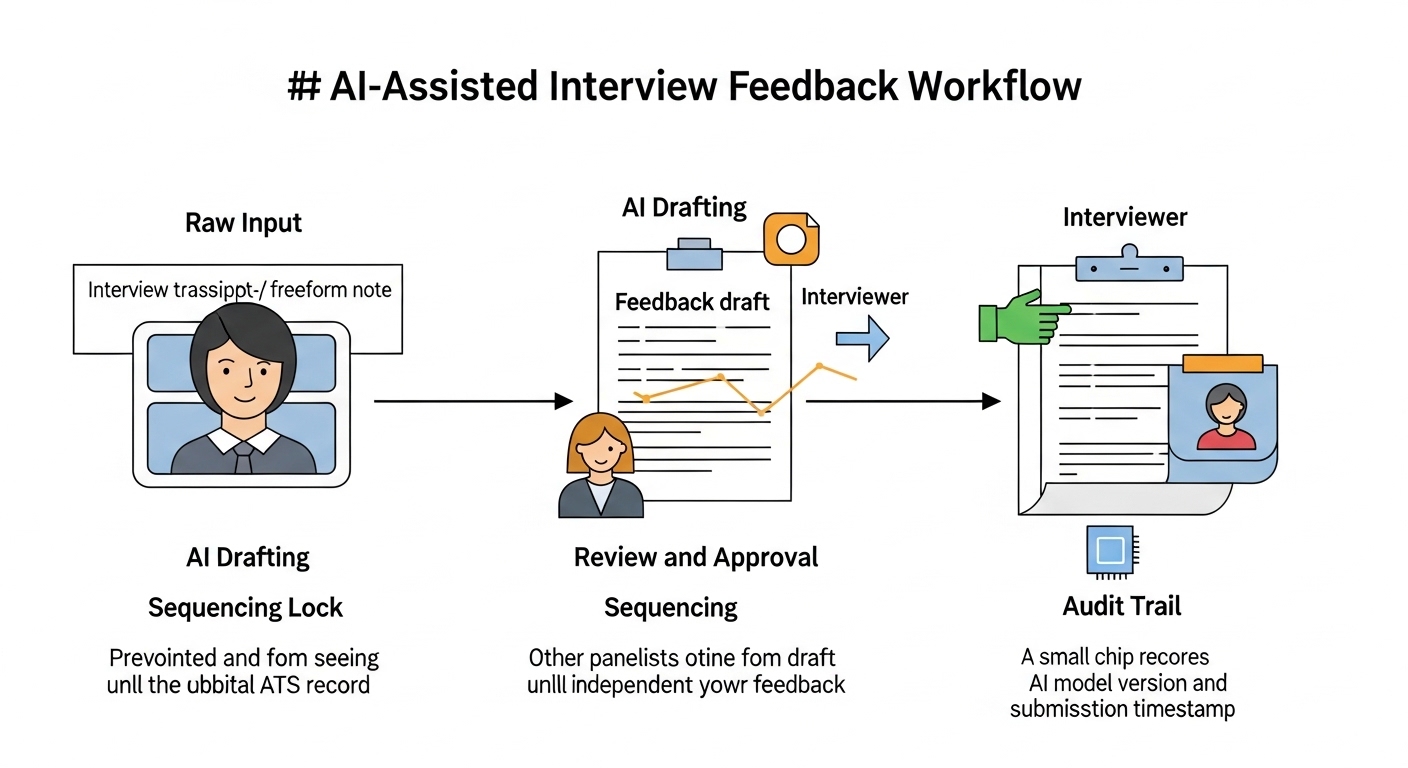

AI-assisted interview feedback means the ATS uses a transcript or interviewer notes as input, drafts a structured feedback record against your scorecard criteria, and routes it to the interviewer for review before the debrief. It compresses the window between the interview and the hiring decision without removing human authorship from the record.
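To make the draft-then-approve flow concrete, here is a minimal Python sketch of a feedback record under a hypothetical ATS data model. `FeedbackRecord`, its fields, and the `"draft"`/`"submitted"` statuses are illustrative names, not any vendor's API. It shows the division of labor: the model prefills one evidence field per scorecard criterion, only the owning interviewer can edit and approve, and the record stores model version and input source next to the approver.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class FeedbackRecord:
    """Hypothetical ATS feedback record with one evidence field per scorecard criterion."""
    candidate_id: str
    interviewer_id: str
    criteria_notes: dict[str, str]   # criterion name -> evidence text (AI-prefilled)
    model_version: str               # which model produced the draft
    input_source: str                # e.g. "transcript" or "interviewer_notes"
    status: str = "draft"            # stays "draft" until the interviewer approves
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None

    def edit(self, criterion: str, text: str) -> None:
        # Edits are only allowed while the record is still a draft.
        if self.status != "draft":
            raise ValueError("submitted records are read-only")
        if criterion not in self.criteria_notes:
            raise KeyError(f"unknown scorecard criterion: {criterion}")
        self.criteria_notes[criterion] = text

    def approve(self, user_id: str) -> None:
        # Only the interviewer who owns the record can make it final.
        if self.status != "draft":
            raise ValueError("record already submitted")
        if user_id != self.interviewer_id:
            raise PermissionError("only the interviewer can approve their own feedback")
        self.status = "submitted"
        self.approved_by = user_id
        self.approved_at = datetime.now(timezone.utc)


# Example: an AI-prefilled draft that the interviewer corrects, then approves.
record = FeedbackRecord(
    candidate_id="cand-17",
    interviewer_id="int-3",
    criteria_notes={
        "Problem solving": "Walked through a caching design and its tradeoffs.",
        "Communication": "Answered clearly.",
    },
    model_version="example-model-v1",  # placeholder, not a real model name
    input_source="transcript",
)
record.edit("Communication", "Answered directly and checked assumptions before coding.")
record.approve("int-3")
```

The design choice worth keeping is that approval, not drafting, is what makes the record final, so the draft never reaches the debrief on its own.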

In practice

- A recruiter notices that one hiring manager always submits feedback three days late and in inconsistent formats. AI drafting cuts submission time to under 30 minutes by giving the manager a structured draft to edit rather than a blank form to fill.

- A panel of five interviewers uses an AI tool to draft notes from individual transcripts. The ATS hides all drafts until every panelist has reviewed and submitted their own version, preventing the first submission from anchoring the rest.

- During a bias audit, a TA ops lead discovers that the AI drafts consistently use stronger language for candidates from one university cohort. The pattern came from the model's training data, not from the interviewers. The fix was adding an explicit neutrality instruction to the system prompt.
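The hide-until-everyone-submits rule from the panel example can be stated precisely in a few lines. This is a minimal Python sketch with a hypothetical `PanelFeedback` object, not any ATS vendor's API: before the panel is complete, each panelist sees only their own submission, and once every panelist has submitted, everyone sees all of them.

```python
from dataclasses import dataclass, field


@dataclass
class PanelFeedback:
    """Hypothetical panel gate: submissions stay hidden until every panelist submits."""
    panelists: set[str]
    submissions: dict[str, str] = field(default_factory=dict)

    def submit(self, panelist: str, feedback: str) -> None:
        if panelist not in self.panelists:
            raise KeyError(f"{panelist} is not on this panel")
        self.submissions[panelist] = feedback

    def is_complete(self) -> bool:
        # Complete only when every panelist has a submission on file.
        return all(p in self.submissions for p in self.panelists)

    def visible_to(self, viewer: str) -> dict[str, str]:
        # Before completion, a panelist sees only their own submission (or nothing).
        if self.is_complete():
            return dict(self.submissions)
        own = self.submissions.get(viewer)
        return {viewer: own} if own is not None else {}


panel = PanelFeedback(panelists={"ana", "ben", "cho"})
panel.submit("ana", "Strong on system design; see criterion notes.")
print(panel.visible_to("ben"))  # prints {} because ben cannot see ana's notes yet
```

Gating on the whole panel rather than per pair of interviewers is what removes the anchoring effect: nobody can read a colleague's assessment before committing to their own.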

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how AI feedback fits your scorecard, your ATS configuration, and your compliance posture.

Plain-language summary

- What it means for you: Instead of typing feedback from scratch after every interview, you get a structured draft tied to your scorecard criteria. You edit, approve, and submit. The ATS records the final version as yours.

- How you would use it: Connect your transcription or note-taking tool to the ATS. Set a feedback template that mirrors your scorecard. Review the AI draft against your memory of the interview and correct anything the model got wrong.

- How to get started: Start with one role and one panel. Run both AI-assisted and manual notes in parallel for the first five candidates. Compare quality, time saved, and any scoring gaps before expanding.

- When it is a good time: When your panel consistently submits late or in wildly different formats, and when you already have a shared scorecard that defines what good evidence looks like for each criterion.
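A feedback template that mirrors your scorecard can double as the model's instructions. Below is a minimal Python sketch, assuming the drafting model is asked to return JSON keyed by criterion name. The scorecard entries, the `NEUTRALITY_RULE` wording (modeled loosely on the neutrality instruction from the bias-audit example), and the function names are hypothetical. No specific LLM API is called; you would hand the prompt to whatever tool your ATS integration uses.

```python
import json

# Hypothetical scorecard: criterion name -> what good evidence looks like.
SCORECARD = {
    "Problem solving": "Breaks an ambiguous problem into steps and explains tradeoffs.",
    "Communication": "Answers the question asked and checks for understanding.",
}

# Hypothetical neutrality instruction, in the spirit of the bias-audit fix.
NEUTRALITY_RULE = (
    "Describe only what the candidate said or did. Do not infer ability from "
    "school, employer, or background, and use the same strength of language "
    "for equivalent evidence."
)


def build_prompt(scorecard: dict[str, str], transcript: str) -> str:
    """Assemble a drafting prompt that names every scorecard criterion explicitly."""
    criteria = "\n".join(f"- {name}: {guide}" for name, guide in scorecard.items())
    keys = json.dumps(list(scorecard))
    return (
        f"{NEUTRALITY_RULE}\n\n"
        "Scorecard criteria and what good evidence looks like:\n"
        f"{criteria}\n\n"
        f"Return only a JSON object whose keys are exactly {keys}. "
        'Each value is the supporting evidence from the transcript, or "No evidence".\n\n'
        f"Transcript:\n{transcript}"
    )


def parse_draft(raw: str, scorecard: dict[str, str]) -> dict[str, str]:
    """Reject model output that drops, renames, or adds criteria.

    Invalid JSON raises json.JSONDecodeError, which is a subclass of ValueError.
    """
    draft = json.loads(raw)
    if not isinstance(draft, dict) or set(draft) != set(scorecard):
        raise ValueError("draft does not match the scorecard criteria")
    if not all(isinstance(v, str) for v in draft.values()):
        raise ValueError("every criterion needs text evidence")
    return draft
```

Validating the returned keys against the scorecard keeps a draft from quietly skipping a criterion that the interviewer then forgets to cover.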

When you are running live reqs and tools

- What it means for you: AI feedback drafts change data lineage in your ATS. The audit trail now includes the model version, the input source, and who approved the final note. That lineage supports compliance when it is documented and becomes a liability when it is not.

- How to use it: Gate visibility so no panelist sees another's submission until all have completed. Set the LLM context to include the scorecard criteria explicitly. Flag low-confidence transcript segments for manual review rather than silently including them in the draft.

- How to get started: Map your current feedback bottlenecks before wiring automation. If the problem is late submission, AI drafting helps. If the problem is poor calibration on what good looks like, fix the scorecard first.

- What to watch for: Hallucinated quotes attributed to candidates, panel anchoring when drafts are visible too early, and model drift when a transcription vendor updates their engine without notice.
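Two of these safeguards, holding back low-confidence transcript segments and catching quotes the candidate never said, can be sketched in Python. This assumes your transcription tool exposes a per-segment confidence score and a speaker label; the `Segment` shape, the `"candidate"` label, and the 0.8 threshold are illustrative assumptions, not any vendor's format.

```python
import re
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str       # assumed speaker label, e.g. "candidate" or "interviewer"
    text: str
    confidence: float  # assumed 0.0-1.0 score reported by the transcription tool


def split_by_confidence(
    segments: list[Segment], threshold: float = 0.8
) -> tuple[list[Segment], list[Segment]]:
    """Separate segments safe to draft from ones a human should check first."""
    usable = [s for s in segments if s.confidence >= threshold]
    needs_review = [s for s in segments if s.confidence < threshold]
    return usable, needs_review


def unsupported_quotes(
    draft: str, segments: list[Segment], speaker: str = "candidate"
) -> list[str]:
    """Return double-quoted phrases in the draft that the speaker never said verbatim."""
    said = " ".join(s.text.lower() for s in segments if s.speaker == speaker)
    quoted = re.findall(r'"([^"]+)"', draft)
    return [q for q in quoted if q.lower() not in said]


segments = [
    Segment("interviewer", "Tell me about a recent project", 0.97),
    Segment("candidate", "I led the migration to Postgres", 0.93),
    Segment("candidate", "we cut latency by half", 0.55),
]
usable, needs_review = split_by_confidence(segments)
draft = 'Candidate said "I led the migration to Postgres" and "owned the rollout plan".'
print(unsupported_quotes(draft, usable))  # ['owned the rollout plan']
```

The quote check is deliberately strict: it accepts only case-insensitive verbatim matches against confident segments, so paraphrased quotes and quotes resting on a shaky segment are also flagged for a human to confirm.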

Where we talk about this

On AI with Michal live sessions, AI-assisted interview feedback comes up in the AI in recruiting track when discussing how AI fits into the evaluation pipeline without replacing human judgment. The full debrief sequencing conversation, including anchoring risk and scorecard alignment, is a recurring live discussion. Start at Workshops and bring your current scorecard and ATS name.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "AI interview feedback ATS" for vendor walkthroughs showing how tools like Greenhouse and Lever integrate with transcription and AI drafting.

- Search "structured interview feedback bias" for academic and practitioner sessions on how unstructured notes introduce inconsistency across panels.

Reddit

- r/recruiting has threads on whether AI interview notes are trustworthy and how interviewers feel about having drafts prepared for them.

- r/humanresources covers compliance and disclosure questions that HR leaders raise when AI enters the evaluation record.

Quora

- Search "AI interview feedback recruiter" for a mix of practitioner and skeptic answers on whether AI-drafted notes hold up in hiring disputes.

AI-drafted versus manual interview notes

| Dimension | Manual notes | AI-assisted notes |
|---|---|---|
| Time to submit | Often 24 to 72 hours | Often under 30 minutes with a draft |
| Format consistency | Varies by interviewer | Consistent when the scorecard is the template |
| Anchoring risk | Present if notes are shared early | Present if drafts are visible before every panelist submits |
| Auditability | Relies on ATS timestamps | Includes model version and input source |

Related on this site

- Glossary: Scorecard, Human-in-the-loop (HITL), AI in recruiting, Structured output, Workflow automation

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member