California rules on AI in employment decisions

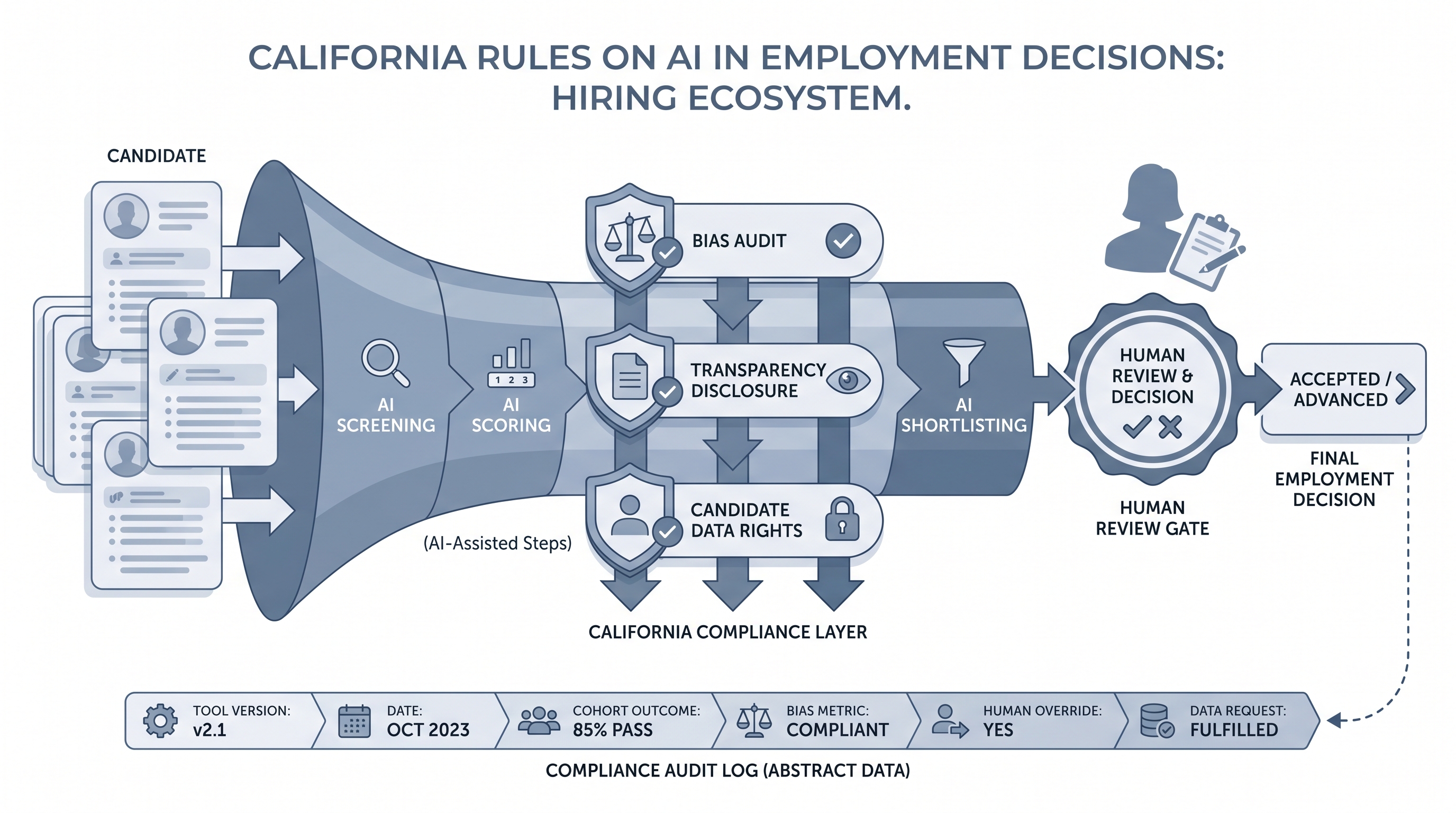

California state laws, CPPA draft regulations, and FEHA obligations that govern how employers use AI tools to screen, score, or rank candidates, including bias testing, data rights, and transparency requirements for automated decision-making.

Michal Juhas · Last reviewed May 5, 2026

What are California rules on AI in employment decisions?

California has more active AI employment regulation than any other US state. Three frameworks overlap for employers using AI in hiring: the Fair Employment and Housing Act (FEHA), the California Privacy Rights Act (CPRA) and its CPPA-led rulemaking on automated decision-making technology, and a growing set of legislative transparency requirements. Each framework places obligations on the employer, not the vendor. A vendor saying their tool is compliant does not transfer liability.

In practice

- A California-based tech startup running async video screening through an AI vendor still carries FEHA liability if that tool screens out more candidates from one protected group than another, even though the vendor built and runs the model.

- A recruiter might say "our vendor is EEOC-compliant," but the EEOC does not certify hiring tools. Vendor marketing language like this often covers a gap that the employer must audit and document itself.

- Talent leaders at companies scaling quickly in California often discover during a compliance review that they added three AI tools in a year with no adverse impact logging, no vendor data processing agreement for candidate data, and no privacy notice update.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: If you use any AI tool to filter, score, or rank candidates for a California role, you own the compliance. Vendor terms do not substitute for employer obligations under FEHA or CPRA.

- How you would use it: Run an annual adverse impact check on any AI-assisted stage. Update your candidate privacy notice each time you add a tool that touches applicant data.

- How to get started: List every tool in your hiring stack that uses AI in any way. For each one, confirm you have a data processing agreement, bias test results from the vendor, and a named internal owner.

- When it is a good time: Before your next vendor renewal or RFP, not after a regulator sends a letter.
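The inventory step above can be kept as a simple structured list rather than a spreadsheet nobody owns. The following is a minimal sketch, assuming three checks per tool (data processing agreement, vendor bias test results, named internal owner) as described in the list; the `HiringTool` class, its field names, and the example tool names are hypothetical and not tied to any ATS or vendor.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HiringTool:
    """One AI-assisted tool in the hiring stack (hypothetical record shape)."""
    name: str
    has_dpa: bool                      # data processing agreement on file
    has_vendor_bias_results: bool      # bias test results received from vendor
    internal_owner: Optional[str] = None  # named person accountable internally


def compliance_gaps(tool: HiringTool) -> List[str]:
    """Return the checklist items this tool is still missing."""
    gaps = []
    if not tool.has_dpa:
        gaps.append("data processing agreement")
    if not tool.has_vendor_bias_results:
        gaps.append("vendor bias test results")
    if not tool.internal_owner:
        gaps.append("named internal owner")
    return gaps


def inventory_report(tools: List[HiringTool]) -> dict:
    """Map each tool name to its open gaps, skipping tools with none."""
    report = {}
    for tool in tools:
        gaps = compliance_gaps(tool)
        if gaps:
            report[tool.name] = gaps
    return report


if __name__ == "__main__":
    # Illustrative entries only; replace with your own vendor list.
    stack = [
        HiringTool("resume-screener", True, True, "TA ops lead"),
        HiringTool("video-scoring", True, False, None),
    ]
    for name, gaps in inventory_report(stack).items():
        print(f"{name}: missing {', '.join(gaps)}")
```

Running the report before a vendor renewal gives legal and TA ops one shared list of open items per tool.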

When you are running live reqs and tools

- What it means for you: FEHA adverse impact applies at every AI-assisted stage: resume screening, async video scoring, Boolean-generated shortlists, and chatbot pre-screening. Run cohort outcome comparisons at least quarterly for high-volume funnels.

- When it is a good time: After adding any new AI feature to your ATS or sourcing stack, and before filing a response if a complaint surfaces at the California Civil Rights Department (CRD, formerly DFEH).

- How to use it: Log hiring outcomes by stage and protected class using ATS exports. Apply the four-fifths rule to compare selection rates across groups: divide each group's selection rate by the rate of the group with the highest rate. Escalate any stage where a group's ratio falls below 80 percent to legal and HR ops immediately.

- How to get started: Pull last quarter's application-to-phone-screen conversion rates split by demographic, if your ATS captures it. If it does not, add that tracking before the next quarter closes.

- What to watch for: Vendors changing their underlying model without notice (look for version-change clauses in your MSA), CPPA rulemaking updates, and sourcing tools that enrich candidate profiles with social or third-party data that may include protected-class proxies.
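The four-fifths comparison mentioned in the list above is a ratio test: each group's selection rate is divided by the highest group's rate, and a result below 0.8 is the conventional flag for possible adverse impact. Here is a minimal sketch of that arithmetic, assuming your ATS export can be reduced to `(group, advanced)` pairs for a single stage; the function names, the group labels, and the sample counts are hypothetical. A flag is a screening signal to escalate, not a legal conclusion.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the share of candidates in each group who advanced past a stage.

    records: (group_label, advanced) pairs, one per candidate at that stage.
    """
    applied = Counter()
    advanced = Counter()
    for group, passed in records:
        applied[group] += 1
        if passed:
            advanced[group] += 1
    return {group: advanced[group] / applied[group] for group in applied}


def four_fifths_flags(rates: Dict[str, float], threshold: float = 0.8) -> Dict[str, float]:
    """Return impact ratios for groups that fall below the threshold.

    The impact ratio is a group's selection rate divided by the highest
    group's selection rate. Returns an empty dict if nobody advanced.
    """
    top_rate = max(rates.values(), default=0.0)
    if top_rate == 0:
        return {}
    ratios = {group: rate / top_rate for group, rate in rates.items()}
    return {group: ratio for group, ratio in ratios.items() if ratio < threshold}


if __name__ == "__main__":
    # Made-up counts for one stage (application to phone screen).
    sample = (
        [("group_a", True)] * 40 + [("group_a", False)] * 60
        + [("group_b", True)] * 24 + [("group_b", False)] * 56
    )
    rates = selection_rates(sample)
    for group, ratio in four_fifths_flags(rates).items():
        print(f"{group}: impact ratio {ratio:.2f}, below 0.80")
```

In the sample, group_a advances at 40 percent and group_b at 30 percent. The gap is only 10 percentage points, but the ratio is about 0.75, so group_b is flagged. This is why the rule is applied as a ratio rather than a point difference. Small cohorts produce noisy ratios, so treat results from low-volume reqs with caution.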

Where we talk about this

On AI with Michal live sessions we work through real scenarios: which AI tools trigger California compliance obligations, how to read vendor bias testing claims critically, and when to loop in legal versus when a TA ops fix is enough. The AI in recruiting track connects FEHA and CPRA obligations to the day-to-day hiring stack. If you want to walk through a compliance inventory for your own tools, start at Workshops and bring your vendor list.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data to a new tool.

YouTube

- Search "California AI employment law FEHA" on YouTube for employment attorney explainers on how FEHA applies to algorithmic decisions, especially those released since 2023, when EEOC guidance on algorithmic selection tools arrived.

- Search "CPPA automated decision-making rulemaking" for public CPPA board session recordings where staff walk through the draft ADMT regulations in detail.

Reddit

- r/humanresources has recurring threads on AI screening tools where California-based HR teams share what their legal teams are telling them to audit.

- r/recruiting surfaces sourcer-level discussions on what vendors actually disclose about model training and how teams are handling bias audit requests.

Quora

- Search "California AI hiring compliance" on Quora for practitioner and attorney perspectives on FEHA and CPRA obligations (verify dates before acting on any answer, as rulemaking moves quickly).

California AI employment law at a glance

| Framework | Who it covers | Key obligation |
|---|---|---|
| FEHA | Employers with 5 or more CA employees | Adverse impact testing for AI-assisted decisions |
| CPRA / CPPA ADMT rules | Any employer processing CA resident data | Disclosure, opt-out, risk assessment (draft rules) |
| AB 2013 | AI developers, indirectly employers via vendor due diligence | Training data transparency |
| Federal EEOC guidance | All US employers | Title VII applies to algorithmic screening tools |

Related on this site

- Glossary: Adverse impact, AI bias audit, Human-in-the-loop (HITL), Candidate data enrichment, Personality test for employment, GDPR first-touch outreach

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member