AI-based recruiting

Structuring recruiting workflows so AI models handle high-volume, repeatable tasks (sourcing matches, outreach drafting, screen note summaries, and pipeline flagging) as the operational default, while recruiters own the judgment calls that require context no model reliably provides.

Michal Juhas · Last reviewed May 4, 2026

What is AI-based recruiting?

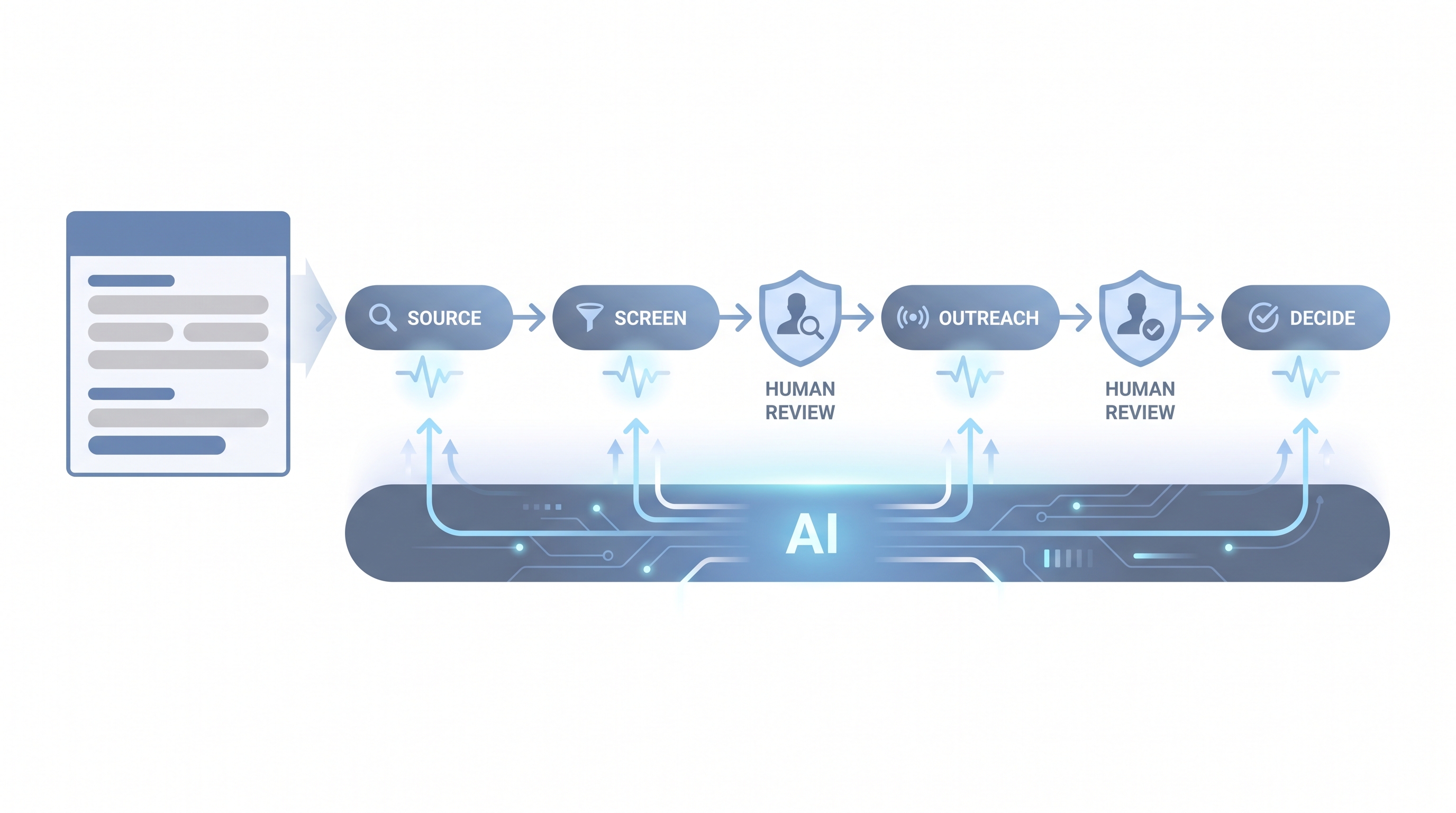

AI-based recruiting means building hiring workflows where AI models are the operational default for high-volume tasks, not an occasional add-on. Sourcing profile matches, drafting outreach variants, summarising phone screen notes into a scorecard, and flagging pipeline gaps are handled by AI first. Recruiters step in where the task requires judgment, candidate relationship context, or a read on dynamics no model reliably handles.

The distinction matters because it changes how teams design processes. Instead of asking "should we use AI for this?", the question becomes "what does this task look like when AI runs it at volume, and where do we put the human gate?" That shift in design logic is what separates AI-based recruiting from casual tool adoption.

In practice

- A TA ops manager at a 300-person scale-up describes their sourcing workflow as "AI-based" because every outreach message starts with a prompt against a saved role brief, every phone screen produces a structured note the AI fills from the recording, and the recruiter reviews both before they go anywhere. No step starts manually.

- A hiring manager asks the team "is this AI-based recruiting or just ChatGPT?" when a batch of identical-sounding InMail messages triggers candidate complaints. The answer separates a tool swap from a workflow with a review gate.

- A TA leader preparing a board presentation describes AI-based recruiting as a capability: the team runs 40 active reqs with the same headcount because sourcing and screening drafts no longer compete for recruiter attention with pipeline reporting.

Quick read, then how hiring teams use it

This is for recruiters, TA leads, and HRBPs who need a shared definition before writing a policy, running a pilot, or evaluating a vendor. Skim the first section for a fast shared picture. Use the second section when you are deciding which task to start with and what review gates to build.

Plain-language summary

- What it means for you: AI handles the draft, flag, and summarise tasks that run the same way dozens of times a week. You own the decisions that depend on knowing the hiring manager, reading the room, or catching what a model cannot see.

- How you would use it: Map the three most repetitive tasks in your current week. Write one prompt for each. Test against five closed roles. Measure how much editing the output needs. Only automate after rework is under 20 percent.

- How to get started: Start with one internal-facing task, not a candidate-facing send. Screen note summaries or pipeline status drafts are low-risk. Document the prompt, the expected output format, and who reviews before you wire anything to the ATS.

- When it is a good time: After your hiring process is stable enough to describe in one page. AI-based recruiting amplifies what already works; it multiplies noise when the process shifts every Monday.
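The test-then-automate step above can be sketched as a small rework check. This is a minimal sketch, assuming "rework" is approximated by the word-level difference between the AI draft and the version the recruiter actually used. The function names and the `difflib`-based metric are illustrative assumptions; only the 20 percent threshold comes from the text.

```python
from difflib import SequenceMatcher
from statistics import mean

# From the text: only automate after rework is under 20 percent.
REWORK_THRESHOLD = 0.20


def rework_rate(ai_draft: str, final_text: str) -> float:
    """Approximate share of the draft that changed (assumption: 1 - word-level similarity)."""
    matcher = SequenceMatcher(None, ai_draft.split(), final_text.split(), autojunk=False)
    return 1.0 - matcher.ratio()


def ready_to_automate(pairs: list[tuple[str, str]], threshold: float = REWORK_THRESHOLD) -> bool:
    """True only if mean rework across all (draft, final) pairs is under the threshold."""
    if not pairs:
        return False  # no test evidence means no automation
    return mean(rework_rate(draft, final) for draft, final in pairs) < threshold


if __name__ == "__main__":
    # Hypothetical pair from a closed role: the recruiter changed one word in nine.
    draft = "Strong Python skills and five years of backend experience"
    final = "Strong Python skills and six years of backend experience"
    print(round(rework_rate(draft, final), 2))
    print(ready_to_automate([(draft, final)]))
```

A draft where one word in nine was replaced scores about 0.11, so it passes the gate. In a real test you would feed in one pair per closed role and read the mean, not a single example. A word-level diff undercounts small but important edits, such as a wrong salary figure, so treat the number as a screening signal alongside the recruiter's own read.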

When you are running live reqs and tools

- What it means for you: AI-based recruiting means candidate PII moves through model APIs, vendor tools update model versions without notice, and every automated output carries risk if the review gate is missing or skipped under deadline pressure.

- When it is a good time: Before a high-volume campaign or after a bottleneck appears in screening speed or outreach quality that adding headcount alone cannot fix.

- How to use it: Log the model version, prompt, and output alongside each candidate record. Connect AI outputs to ATS stages only after the prompt is stable, reviewed, and documented. Keep candidate-facing sends behind a human gate until the error rate is boringly low.

- How to get started: Run a side-by-side on closed roles: compare AI shortlists to who you actually hired. Gaps show what the model misses before live candidates are affected. Run a baseline AI bias audit before scaling any screening automation.

- What to watch for: Vendors that retrain shared models on your candidate data, opaque scoring tools with no model version disclosure, and prompts baked into automation flows that nobody updates when the role brief or policy changes.
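The logging and human-gate steps above can be sketched as one small record type. This is a minimal sketch under stated assumptions: the class name, field names, and the rule that only candidate-facing output needs a named reviewer are hypothetical, not any ATS or vendor API. It stores an ATS reference instead of candidate details so PII stays out of the log.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIOutputRecord:
    candidate_id: str       # ATS reference only (assumption: keeps PII out of the log)
    task: str               # e.g. "screen_summary" or "outreach_draft"
    model_version: str      # whatever version string the vendor discloses
    prompt: str             # the exact prompt text that produced the output
    output: str
    candidate_facing: bool
    reviewed_by: Optional[str] = None
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def approve(self, reviewer: str) -> None:
        """Record the named human who reviewed this output."""
        self.reviewed_by = reviewer

    def may_send(self) -> bool:
        """Candidate-facing output stays blocked until a reviewer is recorded."""
        return (not self.candidate_facing) or self.reviewed_by is not None

    def to_json(self) -> str:
        """Serialise the record so it can be stored next to the candidate record."""
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    record = AIOutputRecord(
        candidate_id="ats-123",            # hypothetical ATS reference
        task="outreach_draft",
        model_version="example-model-v1",  # hypothetical version string
        prompt="Draft outreach from the saved role brief.",
        output="Hi, I came across your profile...",
        candidate_facing=True,
    )
    print(record.may_send())   # blocked: no reviewer yet
    record.approve("recruiter@example.com")
    print(record.may_send())   # allowed after review
    print(record.to_json())
```

Keeping the prompt text and model version on every record is what lets you trace a bad output back to a prompt change or a silent vendor model update.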

Where we talk about this

On AI with Michal live sessions, AI-based recruiting comes up in both tracks: the AI in recruiting block covers design logic, prompt stability, and the recruiter-facing steps; the sourcing automation block goes deeper on the technical layer where ATS webhooks, prompt chains, and data routing make AI the operational default rather than a tab you open manually. If you want the full room conversation with peer critique of your actual stack, start at Workshops and bring a real role brief and your ATS name.

Around the web (opinions and rabbit holes)

Third-party creators move fast here. Treat these as starting points, not endorsements, and verify compliance postures and vendor claims directly before wiring candidate data into any tool.

YouTube

- AI in Recruiting: What Talent Teams Need to Know gives a practitioner overview of where AI fits across the hiring funnel, useful for grounding expectations before a vendor demo.

- Introduction to Generative AI (Google Cloud Tech) gives the language-model foundation useful when evaluating how any AI recruiting tool actually works under the hood.

- AI Bias and Fairness Explained (IBM Technology) covers the algorithmic fairness concepts that underpin AI bias audits in hiring contexts, relevant before any screening automation goes live.

Reddit

- How are you actually using AI in your recruiting workflow right now? in r/recruiting is a candid survey of tools and use cases from practitioners doing this week to week.

- AI tools for recruiting: 6 months in, what worked and what did not in r/recruiting is honest about failure modes that vendor demos rarely show.

- Has AI made recruiting easier or just different? in r/Recruitment surfaces both efficiency gains and the process design challenges that AI-based workflows introduce.

Quora

- How is artificial intelligence changing the recruitment process? collects varied practitioner perspectives across sourcing, screening, and scheduling, with a wide enough range to calibrate your own team's assumptions.

AI-based versus AI-assisted recruiting

| Dimension | AI-assisted | AI-based |
|---|---|---|
| Design starting point | Existing manual process, AI added where useful | Workflow designed around what AI does at volume |
| Default mode | Recruiter initiates, AI supports on request | AI runs first, recruiter reviews output |
| Error ownership | Ad hoc | Named owner, defined threshold, runbook |
| Bias checks | Optional | Required before scaling any screening step |
| Maturity level | Rungs 1-2 on the AI adoption ladder | Rungs 3-4 |

Related on this site

- Glossary: AI in recruiting, AI adoption ladder, Human-in-the-loop, Workflow automation, AI bias audit, Scorecard, Recruiter AI

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting

- Courses: Starting with AI: the foundations in recruiting

- Membership: Become a member