Best recruitment platform

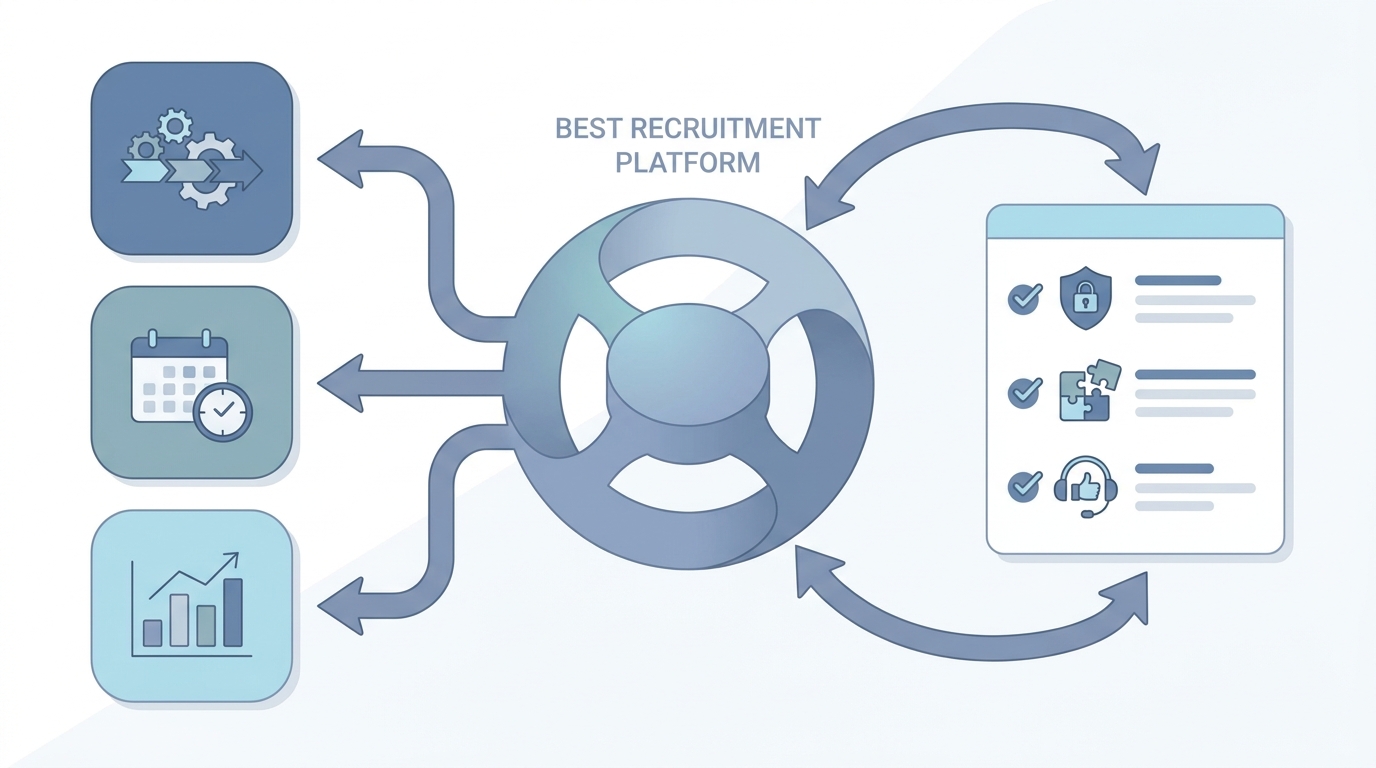

The hiring stack your team actually runs in production: ATS core, integrations, reporting, and governance, chosen after realistic demos and security review rather than slide decks alone.

Michal Juhas · Last reviewed May 3, 2026

What is the best recruitment platform?

There is no universal winner. The best recruitment platform is the one your recruiters can run without heroic spreadsheets, whose integrations keep candidate identities clean, and that lets your security partners sleep at night. Buyers compare ATS cores, career site CMS, CRM layers, and analytics, then judge how honestly each vendor handles the edge cases they already hit.

In practice

- TA ops says "we are on Workday Recruiting" or "Greenhouse plus a CRM bolt-on" when they mean the whole hiring stack, not a single tab.

- Finance asks "which platform owns the headcount" when approvals and budgets sit in the HRIS while reqs live in the ATS.

- Vendors pitch "best recruitment platform" in RFPs; practitioners translate that to uptime, dedupe rules, and who answers the phone at 9 p.m. on launch weekend.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: It is the main hiring software bundle your team lives in daily, plus the integrations that keep candidate data consistent.

- How you would use it: You compare vendors against your hardest workflows, not the happiest demo path.

- How to get started: Write down five moments last month when the current stack failed. Turn those into demo scripts every finalist must pass.

- When it is a good time: When renewals approach, when duplicate rows or GDPR questions spike, or when new AI modules need a trustworthy core.

When you are running live reqs and tools

- What it means for you: Platform decisions set guardrails for structured output, webhooks, and reporting. Weaknesses in the core leak into every downstream tool.

- When it is a good time: Before you sign a multiyear contract, after a failed integration audit, or when hiring managers stop trusting funnel metrics.

- How to use it: Run parallel exports from the current and candidate systems, involve security and finance early, and keep a single scorecard that owners update weekly during trials.

- How to get started: Freeze net-new shadow IT integrations for ninety days while you document what already moves data. Then map each existing data flow to a supported API.

- What to watch for: Checkbox AI, opaque pricing tiers, and sales engineers who cannot show error budgets or rollback paths.
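The "run parallel exports" step above can be sketched as a small comparison script. This is a minimal sketch, not any vendor's tooling: it assumes both systems can export candidates as CSV, and the column names `email` and `stage`, the function names, and the sample rows are all hypothetical. Matching on a normalized email is also an assumption; your real dedupe rules may key on something else.

```python
import csv
import io
from collections import Counter


def normalize_email(raw: str) -> str:
    """Trim and lowercase so 'Ben@Example.com ' and 'ben@example.com' match."""
    return raw.strip().lower()


def read_export(csv_text: str) -> list[dict]:
    """Parse a CSV export into row dicts with a normalized email.

    Assumes hypothetical columns named 'email' and 'stage'.
    """
    rows = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        row["email"] = normalize_email(row["email"])
        rows.append(row)
    return rows


def compare_exports(current_csv: str, trial_csv: str) -> dict:
    """Compare the same candidate population exported from two systems."""
    current = read_export(current_csv)
    trial = read_export(trial_csv)

    current_counts = Counter(r["email"] for r in current)
    trial_counts = Counter(r["email"] for r in trial)

    # If an email repeats, the last row wins here; repeats are reported separately.
    current_stage = {r["email"]: r["stage"] for r in current}
    trial_stage = {r["email"]: r["stage"] for r in trial}
    shared = current_stage.keys() & trial_stage.keys()

    return {
        "missing_in_trial": sorted(current_stage.keys() - trial_stage.keys()),
        "extra_in_trial": sorted(trial_stage.keys() - current_stage.keys()),
        "duplicates_in_current": sorted(e for e, n in current_counts.items() if n > 1),
        "duplicates_in_trial": sorted(e for e, n in trial_counts.items() if n > 1),
        "stage_mismatches": sorted(
            e for e in shared if current_stage[e] != trial_stage[e]
        ),
    }


# Hypothetical sample exports, inlined so the sketch is self-contained.
CURRENT_CSV = """email,stage
ana@example.com,Offer
Ben@Example.com ,Screen
cara@example.com,Onsite
"""

TRIAL_CSV = """email,stage
ana@example.com,Offer
ben@example.com,Onsite
ben@example.com,Onsite
dan@example.com,Screen
"""

report = compare_exports(CURRENT_CSV, TRIAL_CSV)
for check, emails in report.items():
    print(check, emails)
```

With these sample rows, the report flags one candidate missing from the trial system, one unexpected extra, one duplicate created during import, and one stage that no longer matches. Each non-empty list is a question for the vendor's sales engineer and a candidate row for the weekly scorecard.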

Where we talk about this

Our AI in recruiting and sourcing automation workshops spend time on realistic vendor demos, data mapping, and when to walk away from shiny roadmaps. Bring your integration list to Workshops so peers can stress-test it.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "how to choose an ATS" for buyer walkthroughs that show admin settings, not only marketing slides.

- Search "ATS demo script recruiting" for question lists hiring teams use during trials.

Reddit

- r/recruiting and r/HRIS host migration threads; verify dates before trusting vendor comparisons.

Quora

- Search "ATS selection criteria enterprise" for mixed answers; treat as conversation starters, not checklists.