AI in hiring

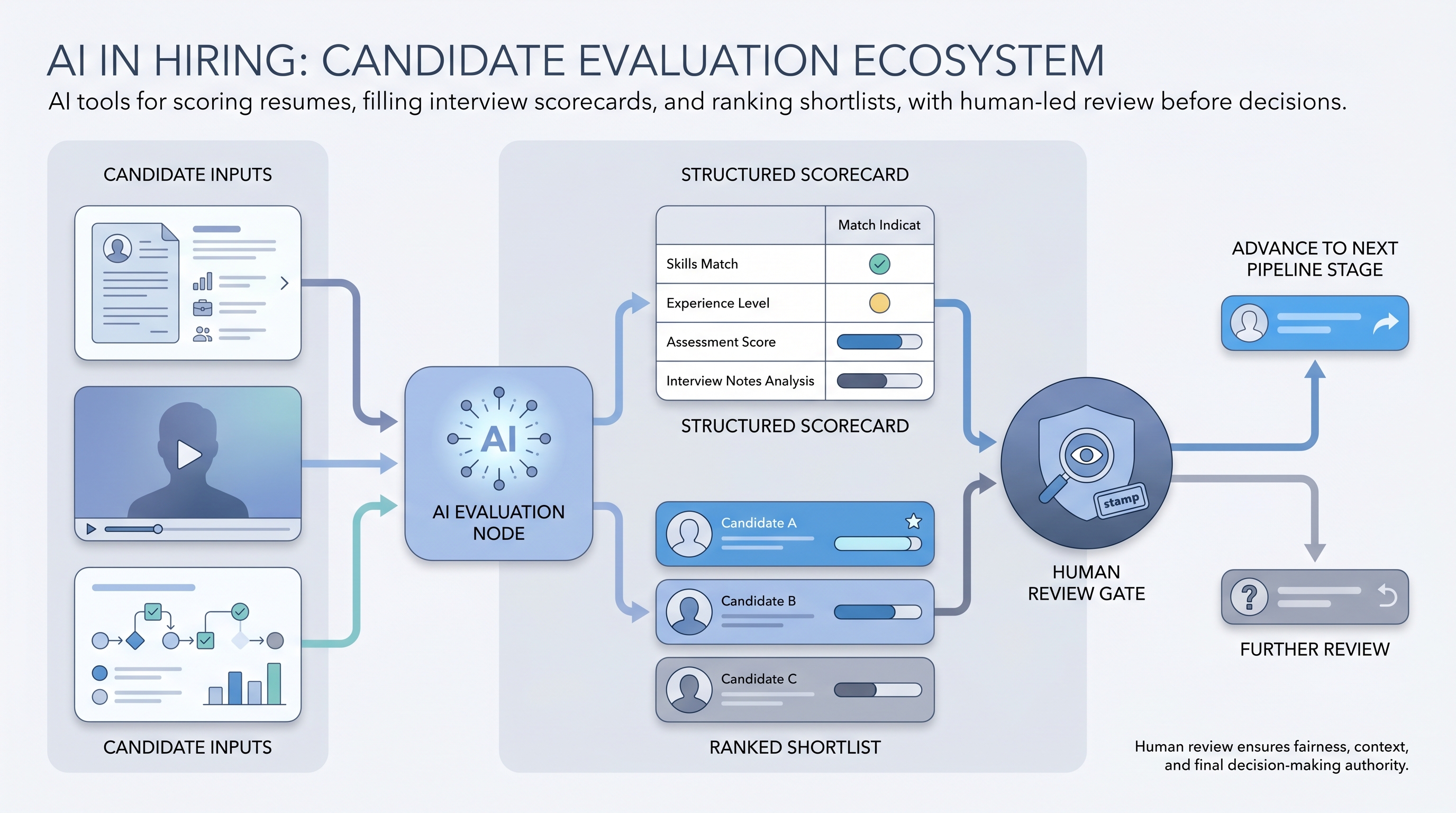

Applying machine learning and language models to the candidate evaluation and selection phase: screening resumes, scoring assessments, analysing video interviews, and recommending shortlists, so hiring decisions move faster and rest on structured criteria rather than gut feel alone.

Michal Juhas · Last reviewed May 4, 2026

What is AI in hiring?

AI in hiring is the use of machine learning and language models specifically at the candidate evaluation and selection stage: screening resumes against job criteria, scoring assessment responses, analysing structured interview notes, and generating shortlist recommendations so decisions rest on documented criteria rather than unexamined intuition.

The scope is narrower than AI in recruiting, which covers the full talent acquisition cycle. AI in hiring sits at the moment a candidate is assessed, advanced, or rejected, which makes it the highest-stakes layer for compliance, auditability, and bias risk.

In practice

- A recruiting coordinator running first-round screens uses an AI tool to fill a structured scorecard from a 20-minute video submission, then reviews the output before advancing the candidate. The AI does the note-taking; the human owns the decision.

- When a TA lead tells the panel "the AI flagged a skills gap on criterion three," it means a resume screening tool surfaced a missing required qualification, which the panel then validates before deciding whether to advance or screen out.

- When a candidate asks "why was my application not progressed?" and the answer is "our tool scored you lower," that is a compliance gap in most European jurisdictions. The real answer must name the criteria, the evidence, and the human who confirmed the decision.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding which stage to instrument first and what review gates to build.

Plain-language summary

- What it means for you: AI tools can fill scorecards, flag missing criteria, and rank resumes so you spend panel time on genuine judgment calls rather than data extraction from documents.

- How you would use it: Pick one evaluation step that is high-volume and pattern-driven, run AI against closed-role samples to measure accuracy, then instrument it with a human review gate before outputs affect live candidates.

- How to get started: Start with interview briefing documents or scorecard templates that recruiters edit before the panel sees them. Keep the AI advisory for at least one full hire cycle before linking it to advance or reject decisions.

- When it is a good time: After your hiring criteria are stable enough to express as a scorecard and your team has read the vendor disclosure on training data and model version.
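Measuring accuracy on closed-role samples can be as simple as counting how often the tool's recommendation matched what the hiring team actually decided. The sketch below is a minimal illustration, not a vendor method: the `PilotRecord` fields, the `"advance"` / `"reject"` labels, and the sample data are all hypothetical, and a real pilot would export them from your own ATS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotRecord:
    """One closed-role candidate: what the AI suggested vs. what humans decided."""
    candidate_id: str
    ai_recommendation: str  # "advance" or "reject" (hypothetical labels)
    human_outcome: str      # "advance" or "reject", taken from the closed role


def pilot_summary(records: list[PilotRecord]) -> dict[str, float]:
    """Return the agreement rate and the share of human-advanced
    candidates that the AI would have rejected."""
    if not records:
        raise ValueError("pilot needs at least one record")
    agreed = sum(r.ai_recommendation == r.human_outcome for r in records)
    advanced = [r for r in records if r.human_outcome == "advance"]
    missed = sum(r.ai_recommendation == "reject" for r in advanced)
    return {
        "agreement_rate": agreed / len(records),
        "missed_advance_rate": missed / len(advanced) if advanced else 0.0,
    }


# Made-up sample data for illustration only.
sample = [
    PilotRecord("c-001", "advance", "advance"),
    PilotRecord("c-002", "reject", "reject"),
    PilotRecord("c-003", "reject", "advance"),
    PilotRecord("c-004", "advance", "reject"),
]
summary = pilot_summary(sample)
print(summary)
```

The missed-advance rate is reported separately because a tool that quietly rejects people your panel would have advanced is usually the costlier error, and a single agreement figure can hide it.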

When you are running live reqs and tools

- What it means for you: AI-assisted hiring decisions require a decision log, a named reviewer, and a candidate disclosure. These are not paperwork; they are the difference between a defensible process and a bias complaint with no traceable record.

- When it is a good time: Before a high-volume campaign or after a bottleneck in screening speed that adding headcount cannot fix. Not while criteria are still changing every sprint.

- How to use it: Log tool name, model version, input, output, and reviewer per candidate per stage. Keep outputs advisory rather than deterministic. Pair with a human-in-the-loop gate before any advance or reject is written to the ATS.

- How to get started: Pilot on ten closed roles. Compare AI recommendations to actual outcomes and calibrate before live use. Run an AI bias audit on the pilot output before scaling.

- What to watch for: Vendors that retrain on your candidate data without an opt-out, model updates that shift scoring without notification, false-precision scores presented as pass/fail thresholds, and audit trails stored in spreadsheets that get deleted after 90 days.
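The decision log and human review gate described above can be sketched in a few lines. This is an assumption-laden illustration rather than a compliance-ready implementation: `DecisionLogEntry`, `confirm_decision`, `to_log_line`, and every field name are hypothetical, and where the resulting line is stored (ATS, database, append-only file) is left open.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionLogEntry:
    """One record per candidate per stage (field names are hypothetical)."""
    candidate_id: str
    stage: str
    tool_name: str
    model_version: str
    input_ref: str                  # pointer to the resume, video, or notes used
    ai_output: str                  # advisory output, stored as received
    reviewer: Optional[str] = None  # named human who confirms the decision
    final_decision: Optional[str] = None
    logged_at: str = ""


def confirm_decision(entry: DecisionLogEntry, reviewer: str, decision: str) -> DecisionLogEntry:
    """Human-in-the-loop gate: a named reviewer sets the final decision."""
    if not reviewer.strip():
        raise ValueError("a named reviewer is required")
    if decision not in {"advance", "reject"}:
        raise ValueError("decision must be 'advance' or 'reject'")
    entry.reviewer = reviewer
    entry.final_decision = decision
    entry.logged_at = datetime.now(timezone.utc).isoformat()
    return entry


def to_log_line(entry: DecisionLogEntry) -> str:
    """Serialise an entry for storage; refuse entries nobody has reviewed."""
    if entry.reviewer is None or entry.final_decision is None:
        raise ValueError("unreviewed AI output must not be written as a decision")
    return json.dumps(asdict(entry), sort_keys=True)


# Made-up example values.
entry = DecisionLogEntry(
    candidate_id="c-001",
    stage="resume_screen",
    tool_name="example-screener",
    model_version="2026-04",
    input_ref="resumes/c-001.pdf",
    ai_output="gap flagged on criterion three",
)
confirm_decision(entry, reviewer="J. Recruiter", decision="advance")
line = to_log_line(entry)
print(line)
```

Keeping `ai_output` and `final_decision` as separate fields preserves the difference between what the tool suggested and what the reviewer decided, which is the detail a candidate explanation or audit would need.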

Where we talk about this

In AI with Michal live sessions, the AI in recruiting workshops cover the full hiring cycle, including how AI evaluation tools fit into compliant workflows. Sourcing automation blocks address the upstream data layer that feeds hiring-stage tools. If you want a live room conversation on audit design and vendor evaluation rather than a static page, join Workshops and bring your current screening process as a one-pager.

Around the web (opinions and rabbit holes)

Third-party creators move fast here. Treat these as starting points, not endorsements, and verify compliance postures directly before wiring candidate data to any vendor tool.

YouTube

- AI in Hiring: Bias, Fairness, and Compliance covers the regulatory landscape that any TA team evaluating hiring-stage AI tools needs to understand before a pilot.

- How AI Resume Screening Works walks through the mechanics of matching algorithms so you can ask better questions when a vendor demos a screening product.

- AI Bias and Fairness Explained (IBM Technology) covers algorithmic fairness concepts that underpin AI bias audits in hiring contexts.

Reddit

- Is AI screening actually fair? in r/recruiting is a candid thread on what practitioners have seen in live deployments rather than vendor demos.

- How do you explain AI-assisted decisions to candidates? in r/Recruitment covers the disclosure conversation that most teams are unprepared for before the first complaint arrives.

- AI hiring tools: six months in, honest review in r/recruiting covers practical failure modes that vendor roadmaps do not mention.

Quora

- How does AI affect the fairness of hiring decisions? collects practitioner and academic perspectives on bias, explainability, and regulatory risk in AI-assisted selection.

AI in hiring across the evaluation funnel

| Stage | Typical AI use | Human gate |
|---|---|---|
| Resume screening | Flag criteria matches and gaps | Recruiter confirms before advance |
| Async video | Transcribe and score structured responses | Recruiter reviews before panel invite |
| Assessment scoring | Rank by performance percentile | TA lead validates cutoffs |
| Scorecard completion | Fill from interview notes | Interviewer edits before submission |

Related on this site

- Glossary: AI in recruiting, AI bias audit, Scorecard, Async screening, Human-in-the-loop, AI adoption ladder, One-way video interview

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Courses: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member