AI hiring tools

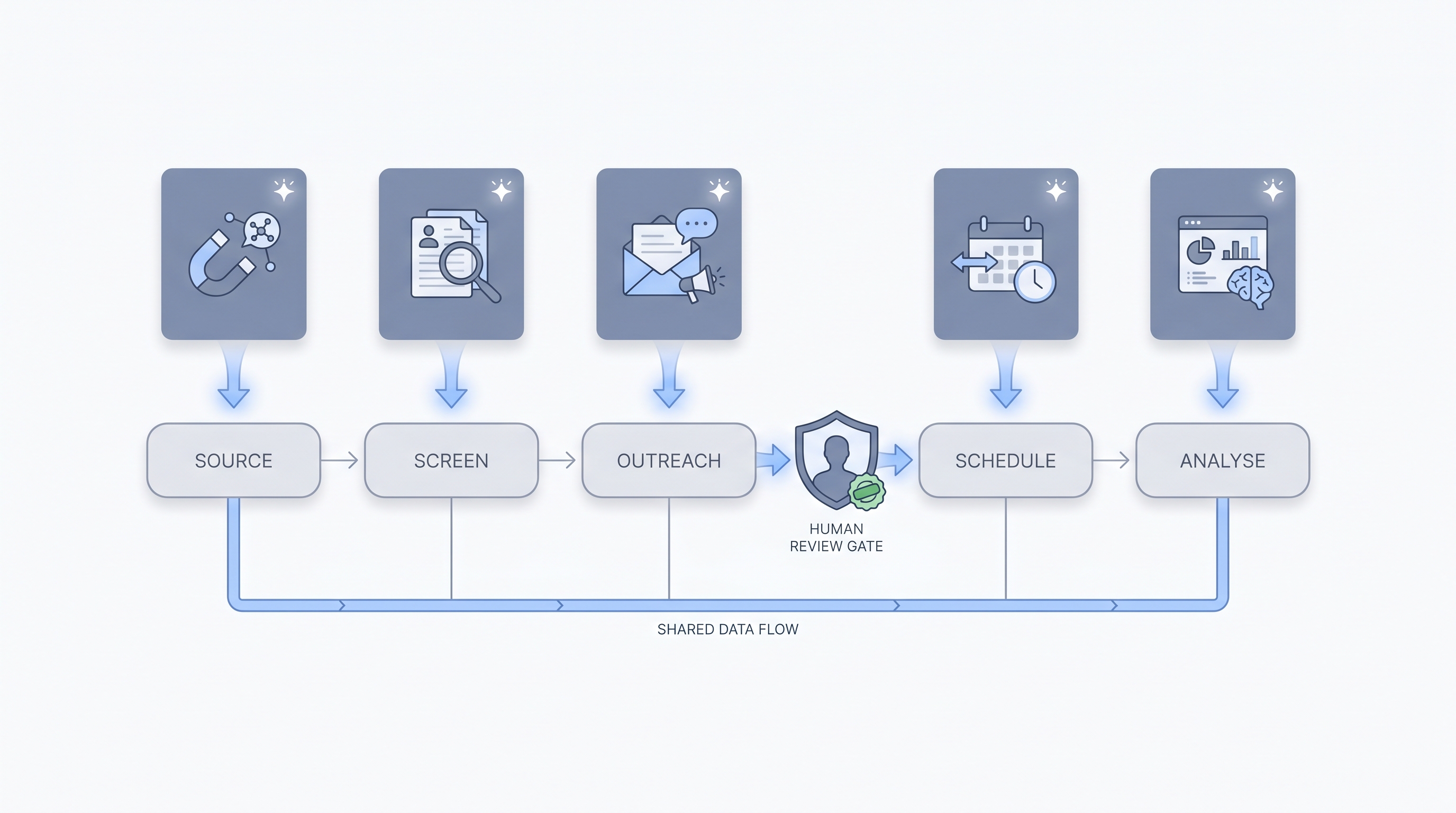

Software applications that use artificial intelligence to automate or augment specific tasks in the hiring process, including sourcing candidates, screening resumes, drafting outreach, scheduling interviews, and analysing pipeline data.

Michal Juhas · Last reviewed May 4, 2026

What are AI hiring tools?

AI hiring tools are software applications that use machine learning or language models to automate or augment a specific task in the recruiting process. The category is broad: it covers everything from a language model that drafts outreach messages to a sourcing tool that surfaces passive candidates via semantic search, a scheduling tool that coordinates availability without email chains, and an interview intelligence tool that structures call notes automatically.

What ties them together is that AI takes on work that previously required manual recruiter effort, whether that is reading and ranking 200 resumes, personalising 50 outreach messages, or turning a 40-minute interview transcript into a five-bullet summary.

In practice

- When a recruiter uses ChatGPT to draft a first cut of a job description and edits the best version before pasting it into their ATS, that is the simplest form of an AI hiring tool: one prompt, no integration, immediate time saving.

- When a TA ops team connects a sourcing platform to their ATS so that candidate profiles sourced via AI-ranked search land in the ATS with source attribution and a pre-filled scorecard summary, that is an AI hiring tool working as part of an integrated stack.

- When a sourcer says "the AI shortlist came back and I had to remove 30 percent because the model did not understand the seniority signal," that is a normal calibration conversation about an AI sourcing tool, not a product failure. Human review of AI output is the expected pattern, not the exception.

Quick read, then how hiring teams use it

This section is for recruiters, sourcers, TA managers, and HR ops practitioners who need shared vocabulary for evaluating, buying, or defending AI tool decisions. Skim the first part for a shared definition. Read the second when you are deciding what to trial, connect, or sunset.

Plain-language summary

- What it means for you: AI hiring tools are software that uses machine learning to help your team work faster at specific tasks: drafting, searching, summarising, scheduling, or predicting. The "AI" label covers everything from a simple classifier to a large language model, so ask which one the vendor uses.

- How you would use it: You pick one task where you lose significant time each week, trial a tool for that task, and review its output before it changes anything in your ATS or goes to a candidate.

- How to get started: Name your highest-friction task (not the most impressive category). Try one tool for it. Run the output alongside your manual work for two weeks. Compare quality before you add a second tool.

- When it is a good time: After you know what good output looks like for the task the tool is solving. Not while the task or the process is still changing.
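
The side-by-side comparison described above can be made concrete with a small calculation. This is a minimal sketch, not a prescribed method: it assumes you can export candidate IDs from both your manual shortlist and the tool's shortlist for the same role, and the function name `compare_shortlists` and the sample IDs are hypothetical.

```python
def compare_shortlists(manual: set, ai: set) -> dict:
    """Compare an AI shortlist against the recruiter's manual shortlist."""
    agreed = manual & ai  # candidates both the recruiter and the tool picked
    return {
        # Share of the tool's picks that the recruiter also picked.
        "ai_picks_confirmed": len(agreed) / len(ai) if ai else 0.0,
        # Share of the recruiter's picks that the tool also found.
        "manual_picks_found": len(agreed) / len(manual) if manual else 0.0,
        # Candidates only the tool surfaced; worth a manual look.
        "ai_only": sorted(ai - manual),
    }


# Hypothetical candidate IDs for one role during the trial period.
manual_picks = {"c01", "c02", "c03", "c04"}
ai_picks = {"c02", "c03", "c04", "c05", "c06"}

result = compare_shortlists(manual_picks, ai_picks)
print(result)
```

Tracking these two ratios per role over the two weeks gives you a simple baseline to compare against before you add a second tool.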

When you are running live reqs and tools

- What it means for you: AI hiring tools change which tasks get recruiter attention. They handle first drafts, profile ranking, and note structuring; recruiters spend time on calibration, candidate relationships, and judgment calls the model cannot make. That trade-off only holds if outputs are reviewed before they touch candidate records or go to candidates.

- When it is a good time: After you have a human-in-the-loop review step defined for each tool's output, compliance sign-off on any tool that influences a pass-or-fail decision, and a named owner for credentials and error monitoring.

- How to use it: Connect AI hiring tools to your ATS with field-mapped integrations. Keep candidate-facing sends and ATS status changes behind a review gate. Log model versions quarterly so you can answer bias and compliance questions with data.

- How to get started: Run one tool through a 30-day trial with three live roles. Score on candidate quality, output accuracy, and security posture. Involve IT in the security questionnaire before extending access.

- What to watch for: Confident wrong output (especially in resume parsing), bias in AI-ranked shortlists, candidates whose data is processed by a vendor whose DPA terms you have not reviewed, and features that skip the review queue and write directly to candidate records.
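
For teams building their own glue between a tool and the ATS, the review gate and model-version logging described above might look roughly like the sketch below. Everything here is an assumption for illustration: `ProposedAction`, `ReviewGate`, the action kinds, and the model version string are hypothetical names, not any vendor's or ATS's API.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ProposedAction:
    """Something an AI tool wants to do; nothing happens until a human approves."""
    candidate_id: str
    kind: str            # hypothetical kinds: "send_message", "update_status"
    payload: str
    model_version: str   # recorded so bias and compliance questions can be answered later
    approved_by: Optional[str] = None


@dataclass
class ReviewGate:
    pending: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def propose(self, action: ProposedAction) -> None:
        # AI output lands in a queue instead of the ATS or the candidate's inbox.
        self.pending.append(action)

    def approve(self, action: ProposedAction, reviewer: str) -> ProposedAction:
        # Raises ValueError if the action was never queued for review.
        self.pending.remove(action)
        action.approved_by = reviewer
        self.audit_log.append({
            "date": date.today().isoformat(),
            "candidate_id": action.candidate_id,
            "kind": action.kind,
            "model_version": action.model_version,
            "reviewer": reviewer,
        })
        return action  # only now would the caller write to the ATS or send


gate = ReviewGate()
draft = ProposedAction("c02", "send_message", "Hi, saw your profile...", "ranker-2026-04")
gate.propose(draft)
approved = gate.approve(draft, reviewer="recruiter@example.com")
```

The design choice is that the only path to a candidate record or a candidate's inbox runs through `approve`, and every approval leaves a log entry with the model version attached.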

Where we talk about this

On AI with Michal workshops, AI hiring tools are tested live on real role briefs, not in vendor demos. The AI in recruiting track covers tool selection across the full funnel. The sourcing automation track focuses on sourcing and outreach tools with integration and compliance context. If you want peer comparison on a specific tool shortlist, start at Workshops and bring the tools you are evaluating alongside your actual ATS and compliance constraints.

Around the web (opinions and rabbit holes)

Treat these as starting points, not endorsements. AI hiring tool categories, features, and pricing change rapidly. Verify vendor details directly before connecting any tool to live candidate data.

YouTube

- AI in Recruiting: What Talent Teams Need to Know covers the TA-specific context for evaluating AI hiring tools across the funnel.

- AI Bias and Fairness Explained (IBM Technology) covers the fairness concepts that apply whenever an AI tool scores or ranks candidates.

- Introduction to Generative AI (Google Cloud Tech) explains the model layer behind most generative AI hiring features, useful for pressure-testing vendor claims.

Reddit

- AI tools for recruiting: 6 months in, what worked and what did not in r/recruiting is honest about which tool categories paid off and which did not.

- How are you actually using AI in your recruiting workflow right now? in r/recruiting is a broad survey of tools in active use.

- Best AI sourcing tools you actually use? in r/recruiting collects practitioner recommendations with real production context.

Quora

- What are the best AI tools for recruiting and hiring? has wide-ranging recommendations with varying levels of real-world grounding.

AI hiring tools by stage

| Stage | Tool category | What it does |
|---|---|---|
| Sourcing | Semantic search, signal-based ranking | Surfaces passive candidates matching a brief |
| Screening | Resume parsing, scorecard fill | Summarises and qualifies applicant pool |
| Outreach | Personalised message drafting | Writes and sequences candidate messages |
| Scheduling | Availability coordination | Removes email back-and-forth from interview setup |
| Interviews | Transcription, note structuring | Turns recordings into structured summaries |
| Analytics | Pipeline intelligence | Flags drop-off and tracks source quality |

Related on this site

- Glossary: AI recruiting tools, AI hiring software, AI hiring platform, AI in recruiting, Human-in-the-loop, AI bias audit, Semantic search, Resume parsing, No-code recruiting automation

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting

- Courses: Starting with AI: the foundations in recruiting

- Membership: Become a member