AI tools for recruitment

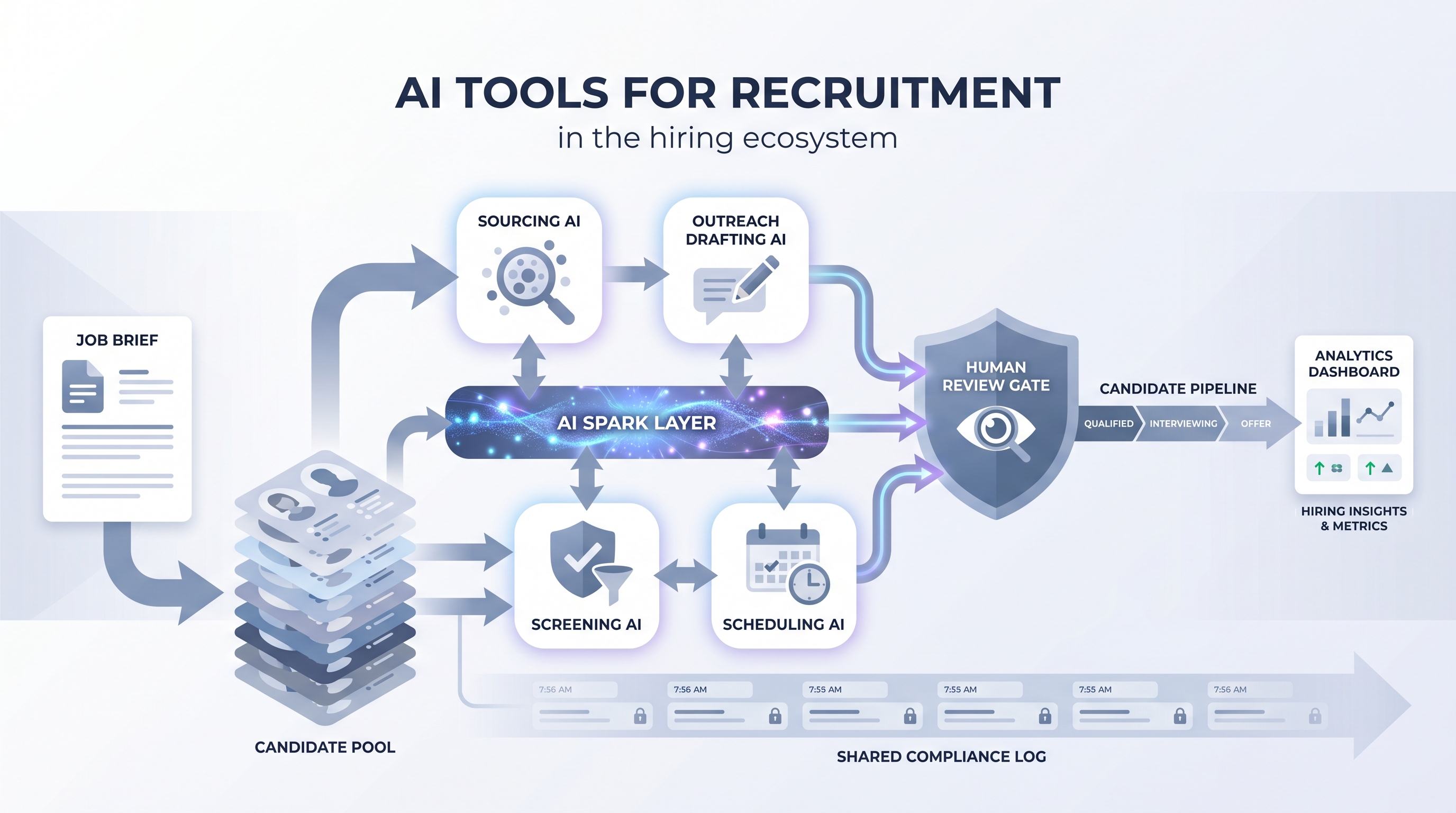

The broad category of software that uses machine learning or large language models to support or automate recruiting tasks, from sourcing and outreach drafting to resume screening, interview scheduling, and pipeline analytics.

Michal Juhas · Last reviewed May 4, 2026

What are AI tools for recruitment?

AI tools for recruitment is the broad category covering any software that uses machine learning or large language models to assist or automate parts of the hiring process. The term spans everything from sourcing platforms that surface passive candidates to resume screening engines that score fit before a recruiter reads the file, outreach assistants that personalize messages at scale, and analytics copilots that flag stale pipeline stages.

The distinguishing feature is that these tools make recommendations or take actions based on patterns in language and data, not just routing rules. That changes both the leverage they offer and the accountability structure when something goes wrong.

In practice

- A recruiter who says "the AI drafted 50 outreach messages and I edited 12 of them before sending" is using an AI recruitment tool the way it works best: high-volume draft, human judgment on send.

- When a TA lead asks "did the AI screen out this candidate or did we?" they are hitting the accountability gap that surfaces in every team that adds screening AI without logging which model version ran and who reviewed the output.

- Running a four-week parallel test, with AI tool recommendations recorded alongside manual recruiter decisions on the same role, is the standard calibration method before committing to a tool at full deployment.
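
One way to read the results of such a parallel test is to count how often the tool and the recruiter reached the same call on the same candidate. The sketch below is a minimal illustration: the `ParallelDecision` schema, the field names, and the sample rows are all hypothetical, and it assumes recruiters decided without seeing the AI output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParallelDecision:
    """One candidate assessed by both the AI tool and a recruiter (hypothetical schema)."""
    candidate_id: str
    ai_advance: bool         # the tool recommended advancing
    recruiter_advance: bool  # the recruiter decided to advance


def summarize_parallel_test(decisions: list[ParallelDecision]) -> dict[str, float]:
    """Return the agreement rate plus the two kinds of disagreement."""
    if not decisions:
        raise ValueError("no decisions to compare")
    total = len(decisions)
    agree = sum(d.ai_advance == d.recruiter_advance for d in decisions)
    ai_only = sum(d.ai_advance and not d.recruiter_advance for d in decisions)
    recruiter_only = sum(d.recruiter_advance and not d.ai_advance for d in decisions)
    return {
        "agreement_rate": agree / total,
        "ai_only_rate": ai_only / total,                # tool advanced, recruiter did not
        "recruiter_only_rate": recruiter_only / total,  # recruiter advanced, tool did not
    }


# Made-up sample rows, for illustration only.
sample = [
    ParallelDecision("c1", True, True),
    ParallelDecision("c2", False, False),
    ParallelDecision("c3", True, False),
    ParallelDecision("c4", False, True),
]
summary = summarize_parallel_test(sample)
```

The disagreement cases are usually the ones worth reading by hand, since they show where the tool and the team weigh candidates differently.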

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA leads, and HRBPs who need to speak the same language when evaluating vendors, configuring tools, or explaining AI-assisted decisions to candidates and compliance teams. Skim the first section for a fast picture. Use the second when you are running live reqs.

Plain-language summary

- What it means for you: AI recruitment tools handle the high-volume, repetitive steps (sourcing, screening, drafting), so you spend more time on decisions that need judgment and less on ones that only need pattern recognition.

- How you would use it: Pick one stage costing the most time per week and ask whether an AI tool could produce a first draft or a shortlist for you to review rather than build from scratch.

- How to get started: Audit which stage costs the most recruiter hours per open req. If it is sourcing or CV review, those are the strongest starting points. Start with one tool and one role type, and run it for four weeks in parallel with your current process.

- When it is a good time: When your volume of applications or sourcing targets has grown past what the team can review at the quality level you want to maintain.
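
The hours-per-open-req audit mentioned above is simple arithmetic, and a small sketch can make it concrete. The stage names, hour figures, and req count below are invented for illustration; substitute numbers from your own time tracking.

```python
def hours_per_open_req(weekly_hours_by_stage: dict[str, float], open_reqs: int) -> dict[str, float]:
    """Divide weekly recruiter hours in each stage by the number of open reqs."""
    if open_reqs <= 0:
        raise ValueError("open_reqs must be positive")
    return {stage: hours / open_reqs for stage, hours in weekly_hours_by_stage.items()}


def costliest_stage(per_req: dict[str, float]) -> str:
    """Return the stage with the highest hours per open req."""
    return max(per_req, key=per_req.get)


# Illustrative numbers only: weekly team hours per stage across 12 open reqs.
weekly_hours = {"sourcing": 18.0, "cv_review": 24.0, "scheduling": 6.0, "outreach": 9.0}
per_req = hours_per_open_req(weekly_hours, open_reqs=12)
starting_point = costliest_stage(per_req)
```

With these sample figures the CV review stage comes out highest, which would make it the candidate for a first four-week parallel run.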

When you are running live reqs and tools

- What it means for you: Every AI recommendation in your hiring funnel is a decision with a paper trail obligation: which model, which prompt, which version, who reviewed, who advanced or rejected.

- When it is a good time: Before adding any AI tool to early-funnel steps at volume. That is the point where bias risk, GDPR rules on automated decision-making, and data residency requirements all converge.

- How to use it: Log model versions and output scores alongside candidate records. Keep a human-in-the-loop gate between any AI recommendation and a candidate-affecting action. Run an AI bias audit on any screening or ranking tool before high-volume deployment.

- How to get started: Map every AI tool in your current stack. For each, record who owns it, where candidate PII goes, and whether anyone reviewed its bias and accuracy profile before it went live. Most teams find at least one tool nobody audited after the first demo.

- What to watch for: Vendors that rebadge existing tools as AI-powered without disclosing the underlying model. AI recommendations copy-pasted to candidate decisions without human review. Scoring outputs that shift after a model update the vendor did not announce.
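
The paper trail and the human-in-the-loop gate described in this list can be sketched as a record type plus a function that refuses to act without a named reviewer. This is a rough sketch under stated assumptions, not a reference to any vendor's API: every class, field, and value here (`AIRecommendation`, `apply_decision`, the tool name, the version string) is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AIRecommendation:
    """What the tool produced (hypothetical schema)."""
    candidate_id: str
    tool: str
    model_version: str
    prompt_id: str
    score: float
    recommendation: str  # "advance" or "reject"


@dataclass(frozen=True)
class ReviewedDecision:
    """The AI output plus the human who reviewed it and what they decided."""
    recommendation: AIRecommendation
    reviewer: str
    final_action: str
    reviewed_at: datetime


class MissingHumanReview(Exception):
    """Raised when a candidate-affecting action has no named reviewer."""


def apply_decision(
    rec: AIRecommendation,
    reviewer: Optional[str],
    final_action: str,
    audit_log: list[ReviewedDecision],
) -> ReviewedDecision:
    """Gate: nothing reaches the candidate record without a reviewer on file."""
    if not reviewer or not reviewer.strip():
        raise MissingHumanReview(f"no reviewer recorded for {rec.candidate_id}")
    if final_action not in {"advance", "reject"}:
        raise ValueError(f"unknown action: {final_action!r}")
    decision = ReviewedDecision(rec, reviewer.strip(), final_action, datetime.now(timezone.utc))
    audit_log.append(decision)  # model version and score are stored with the decision
    return decision


# Illustrative values only.
audit_log: list[ReviewedDecision] = []
rec = AIRecommendation("c-101", "example-screener", "2026-04-r2", "screen-v3", 0.41, "reject")
decision = apply_decision(rec, reviewer="j.doe", final_action="advance", audit_log=audit_log)
```

Keeping the AI recommendation and the final action as separate fields means the log can later answer whether the tool or the recruiter made a given call, and the stored model version gives a baseline for spotting score shifts after a vendor update.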

Where we talk about this

On AI with Michal live sessions, AI tools for recruitment come up across both main tracks. The AI in recruiting track covers tool evaluation, AI feature claims, and where human-in-the-loop gates belong in a real stack. The sourcing automation track goes deeper on how tools hand off data, which integrations break under real load, and what to audit before a vendor goes live on high-volume reqs. Bring your current tool list and your biggest friction point to Workshops for a room-tested conversation with practitioners running similar stacks.

Around the web (opinions and rabbit holes)

Third-party creators cover AI recruitment tools at high speed and mixed depth. These are starting points, not endorsements. Verify compliance postures and integration claims directly with vendors before purchase.

YouTube

- AI tools for recruiters 2025 shows practitioner walkthroughs of live tool setups with honest observations on what held up under real volume.

- Best AI recruitment tools compared includes head-to-head evaluations by TA leads who ran trials on real role types.

- How to evaluate AI recruitment software covers the compliance and bias evaluation process alongside feature comparisons.

Reddit

- What AI tools are you using in recruiting? in r/recruiting collects candid in-production reports from practitioners across company sizes.

- AI recruitment tools that actually work in r/recruiting separates vendor claims from what survives production volume and renewals.

- Has anyone used AI for screening? in r/recruiting surfaces failure stories alongside tools that held up under real intake load.

Quora

- What are the best AI tools for recruitment? gathers practitioner recommendations with varying context; cross-reference with recent Reddit threads and LinkedIn posts from practitioners in your industry.

AI recruitment tools by funnel stage

| Funnel stage | AI tool category | What to log |
|---|---|---|
| Sourcing | Semantic search, profile ranking | Query used, profiles surfaced, model version |
| Outreach | Drafting assistants | Prompt template, edit rate, human approval |
| Screening | CV parsing, scoring AI | Score per candidate, model version, reviewer |
| Interview | Transcription, async video | Consent recorded, summary accuracy, reviewer |
| Pipeline | Copilot nudges, analytics | Nudge trigger, action taken, outcome |
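
The "What to log" column can be turned into a simple completeness check on log entries. The sketch below is one possible encoding: the field names are hypothetical translations of the table cells, and the dictionary-based entry format is an assumption rather than any tool's real export format.

```python
# Field names are hypothetical renderings of the "What to log" column.
REQUIRED_LOG_FIELDS: dict[str, tuple[str, ...]] = {
    "sourcing": ("query", "profiles_surfaced", "model_version"),
    "outreach": ("prompt_template", "edit_rate", "human_approval"),
    "screening": ("score", "model_version", "reviewer"),
    "interview": ("consent_recorded", "summary_accuracy", "reviewer"),
    "pipeline": ("nudge_trigger", "action_taken", "outcome"),
}


def missing_log_fields(stage: str, entry: dict[str, object]) -> list[str]:
    """List the required fields that are absent or None for the given funnel stage."""
    try:
        required = REQUIRED_LOG_FIELDS[stage.lower()]
    except KeyError:
        raise ValueError(f"unknown funnel stage: {stage!r}") from None
    # Compare against None so legitimate values such as 0.0 or False still count as logged.
    return [name for name in required if entry.get(name) is None]


# Illustrative entries only.
screening_gaps = missing_log_fields("Screening", {"score": 0.72, "model_version": "2026-04-r2"})
outreach_gaps = missing_log_fields(
    "outreach", {"prompt_template": "intro-v1", "edit_rate": 0.0, "human_approval": True}
)
```

In this example the screening entry is flagged because no reviewer was recorded, while the outreach entry passes even though its edit rate is zero.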

Related on this site

- Glossary: AI recruiting tools, AI recruitment platform, AI sourcing tools, Applicant tracking software, Semantic search, Resume parsing, AI bias audit, Human-in-the-loop, Async screening, Workflow automation

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting

- Membership: Become a member

- Courses: Starting with AI: the foundations in recruiting