Best applicant tracking software

The ATS that fits your actual workflows, data model, and compliance posture, evaluated against the edge cases your team hits every week rather than the vendor's happy-path demo.

Michal Juhas · Last reviewed May 4, 2026

What is the best applicant tracking software?

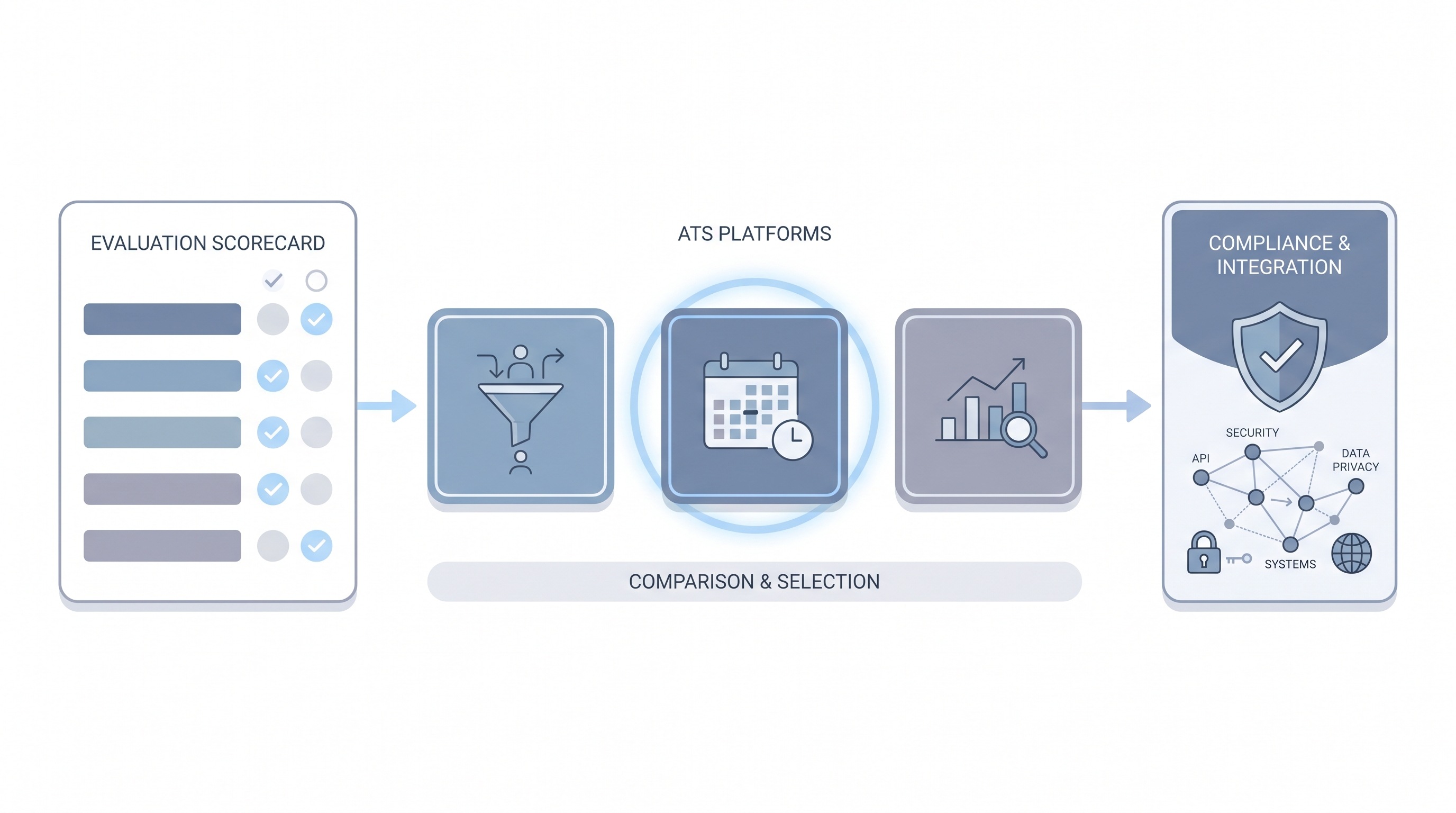

There is no universal winner. The best applicant tracking software is the one your recruiters can actually run without heroic spreadsheets, whose integrations keep candidate identities clean, and which your security and legal partners can audit. Buyers compare ATS cores, career site modules, sourcing CRM layers, and AI features, then judge how honestly each vendor handles the edge cases the team already hits in production.

The word "best" signals buying intent: someone is evaluating a shortlist, renewing a contract, or migrating from a tool that stopped fitting the way the team works. This page focuses on evaluation criteria, not vendor rankings, because platform fit depends on your req volume, integration stack, and compliance posture.

In practice

- A TA ops manager says "we are evaluating whether our current platform still fits" when req volume doubled and the stage logic no longer reflects how the team actually works.

- A recruiter says "the best ATS is the one I actually use" when asked to justify a switch: adoption beats features when the evaluation is about day-to-day speed, not quarterly reports.

- An HRBP describes a compliance gap when she says "nobody enforces the retention settings we configured three years ago," a common signal that the platform has drifted from the policy team's expectations.

Quick read, then how hiring teams use it

This is for recruiters, TA leads, TA ops, and HR partners evaluating platforms, renewing contracts, or migrating from a tool that no longer fits. Skim the first section for shared vocabulary. Use the second when making the actual decision.

Plain-language summary

- What it means for you: "Best ATS" is always relative to your workflows, your req volume, and your team's willingness to maintain configuration. No vendor earns the label across all contexts.

- How you would use it: Build a demo script from real workflows your team runs every week, not the scenario the sales rep wants to show. Ask each finalist to walk through your hardest edge case.

- How to get started: List five moments in the last month where your current ATS failed your team. Turn each failure into a test every shortlisted vendor must pass before a second meeting.

- When it is a good time: When contract renewals approach, when integration alerts are mounting, when AI features require a cleaner data foundation than what you have, or when compliance questions take longer to answer than they should.
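
The "five failures become five tests" step above can be kept honest with a very small gate. The sketch below is only an illustration: the failure descriptions, vendor names, and the `DemoTest` and `VendorRun` types are hypothetical, and it assumes the strict reading that a vendor must pass every test before a second meeting.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DemoTest:
    """One real failure of the current ATS, restated as a live demo test."""
    failure: str    # what went wrong for the team last month
    must_show: str  # what the vendor has to demonstrate, not describe


@dataclass
class VendorRun:
    """Which failures a vendor handled successfully during the demo."""
    vendor: str
    passed: set = field(default_factory=set)


def open_failures(run, tests):
    """Return the failures this vendor has not yet passed, in test order."""
    return [t.failure for t in tests if t.failure not in run.passed]


def earns_second_meeting(run, tests):
    """Strict gate: every test must pass before a second meeting."""
    return not open_failures(run, tests)


# Hypothetical examples; replace with your own five moments.
tests = [
    DemoTest("Duplicate profiles after a re-apply", "Merge rule running live"),
    DemoTest("Offer approval stalled on an absent approver", "Delegation setup"),
    DemoTest("Stage change never reached the HRIS", "Retry and error log"),
    DemoTest("Retention purge did not run", "Retention job and audit trail"),
    DemoTest("Pipeline report disagreed with manual count", "Report definition"),
]

vendor_a = VendorRun("Vendor A", {t.failure for t in tests})
vendor_b = VendorRun("Vendor B", {t.failure for t in tests[:4]})

for run in (vendor_a, vendor_b):
    status = "advance" if earns_second_meeting(run, tests) else "hold"
    print(run.vendor, status, open_failures(run, tests))
```

A spreadsheet does the same job; the point is that each test is written down before the demo and scored pass or fail, so a polished presentation cannot substitute for a missing capability.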

When you are running live reqs and tools

- What it means for you: Platform selection sets guardrails for every downstream tool: sourcing automations, AI scoring, diversity reporting, and workflow automation all inherit the ATS data model and field quality.

- When it is a good time: Before you sign a multiyear contract, after a failed integration audit, or when hiring managers stop trusting the pipeline metrics the ATS produces.

- How to use it: Run parallel historical exports and replay queries on trial tenants. Involve security, legal, and finance before the final demo rather than after. Keep one shared scorecard all evaluators update weekly.

- How to get started: Freeze net-new shadow IT integrations for sixty days while you document what already moves candidate data. Map each integration to a supported API and flag any CSV bridge as a migration risk.

- What to watch for: AI modules marketed as features but untestable in trials, opaque pricing tiers that add per-user costs after go-live, and sales engineers who cannot show error budgets or rollback paths.
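
The integration inventory described above (document what already moves candidate data, map each integration to a supported API, flag CSV bridges) can be sketched as a short script. Everything named here is an assumption for illustration: the `Integration` type, the transport labels, and the sample integrations are hypothetical, and the rule that anything not on a supported API counts as a risk is one reasonable reading rather than a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Integration:
    name: str
    moves_candidate_data: bool
    transport: str  # assumed labels: "api", "csv", or "unknown"


def migration_risks(inventory):
    """Flag integrations that move candidate data without a supported API.

    CSV bridges and undocumented transports are both treated as risks,
    because neither can be mapped to an API the new vendor supports.
    """
    return [
        item
        for item in inventory
        if item.moves_candidate_data and item.transport != "api"
    ]


# Hypothetical inventory; build yours during the sixty-day freeze.
inventory = [
    Integration("HRIS sync", True, "api"),
    Integration("Background check export", True, "csv"),
    Integration("Interview scheduler", True, "api"),
    Integration("Sourcing spreadsheet upload", True, "csv"),
    Integration("Office badge system", False, "csv"),
]

for item in migration_risks(inventory):
    print(f"Migration risk: {item.name} ({item.transport})")
```

Integrations that do not touch candidate data are left out of the risk list on purpose, so the review stays focused on what the migration can actually break.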

Where we talk about this

AI in recruiting workshops cover ATS evaluation as part of the broader stack conversation: how to script a realistic demo, what questions to bring to legal, and which AI features are ready for production versus still experimental. Sourcing automation sessions go deeper on the integration layer. Bring your vendor shortlist to Workshops so peers who have already migrated can stress-test your assumptions before you sign.

Around the web (opinions and rabbit holes)

Third-party creators move fast in this space. Treat these as starting points, not endorsements. Verify vendor capabilities and compliance postures directly before connecting candidate data.

YouTube

- How to choose an ATS + recruiting for buyer walkthroughs that show admin settings and configuration depth, not only marketing slides.

- ATS comparison + recruiters for practitioner-led demos across company sizes, including smaller teams that rarely appear in enterprise analyst reports.

- Switching ATS + lessons learned recruiting for migration stories that cover data mapping decisions and what teams wish they had known before signing.

Reddit

- What ATS does your company use? in r/recruiting collects candid recruiter experiences with major platforms across company sizes.

- ATS data quality -- anyone else have this problem? surfaces the field completion and stage hygiene issues that vendor demos do not address.

- Switching ATS -- what did you wish you knew? in r/RecruitmentAgencies covers migration pain points and data-mapping decisions from practitioners who have gone through it.

Quora

- What is the best applicant tracking system for a small team? collects practitioner opinions across budget ranges; cross-reference with the Reddit threads above for balance.

ATS evaluation criteria at a glance

| Category | What to test in the demo |
|---|---|
| Core pipeline | Stage logic, req lifecycle, offer workflow |
| Integration depth | Webhook reliability, API versioning, error handling |
| AI readiness | Parsing accuracy, scoring explainability, bias audit support |
| Compliance | Data residency, retention controls, subprocessor list |
| Support and migration | Rollback paths, data export, SLA for critical incidents |
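
The five categories in the table can double as the rows of a shared scorecard. The following is a minimal sketch under stated assumptions: the weights and the 1 to 5 scale are hypothetical choices that your evaluators should set together, and a weighted average is only one way to combine scores.

```python
# Hypothetical weights; agree on these before the first demo.
WEIGHTS = {
    "Core pipeline": 5,
    "Integration depth": 5,
    "AI readiness": 3,
    "Compliance": 4,
    "Support and migration": 3,
}


def weighted_score(scores, weights=WEIGHTS):
    """Combine per-category scores (assumed 1 to 5) into a weighted average.

    Raises ValueError if a category is unscored or out of range, so a gap
    in the evaluation cannot silently raise or lower a vendor's total.
    """
    missing = sorted(set(weights) - set(scores))
    if missing:
        raise ValueError(f"Unscored categories: {missing}")
    for category in weights:
        if not 1 <= scores[category] <= 5:
            raise ValueError(f"Score out of range for {category}")
    total_weight = sum(weights.values())
    return sum(scores[c] * w for c, w in weights.items()) / total_weight


# Hypothetical scores for one finalist.
vendor_scores = {
    "Core pipeline": 4,
    "Integration depth": 2,
    "AI readiness": 3,
    "Compliance": 5,
    "Support and migration": 3,
}

print(round(weighted_score(vendor_scores), 2))
```

A single number hides trade-offs, so it is worth keeping the per-category scores visible next to the total, especially where a low compliance or integration score should block a vendor regardless of the average.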

Related on this site

- Glossary: Applicant tracking software, Best recruitment platform, Resume parsing, Workflow automation, Human-in-the-loop, AI bias audit, Candidate data enrichment, Talent acquisition metrics

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member